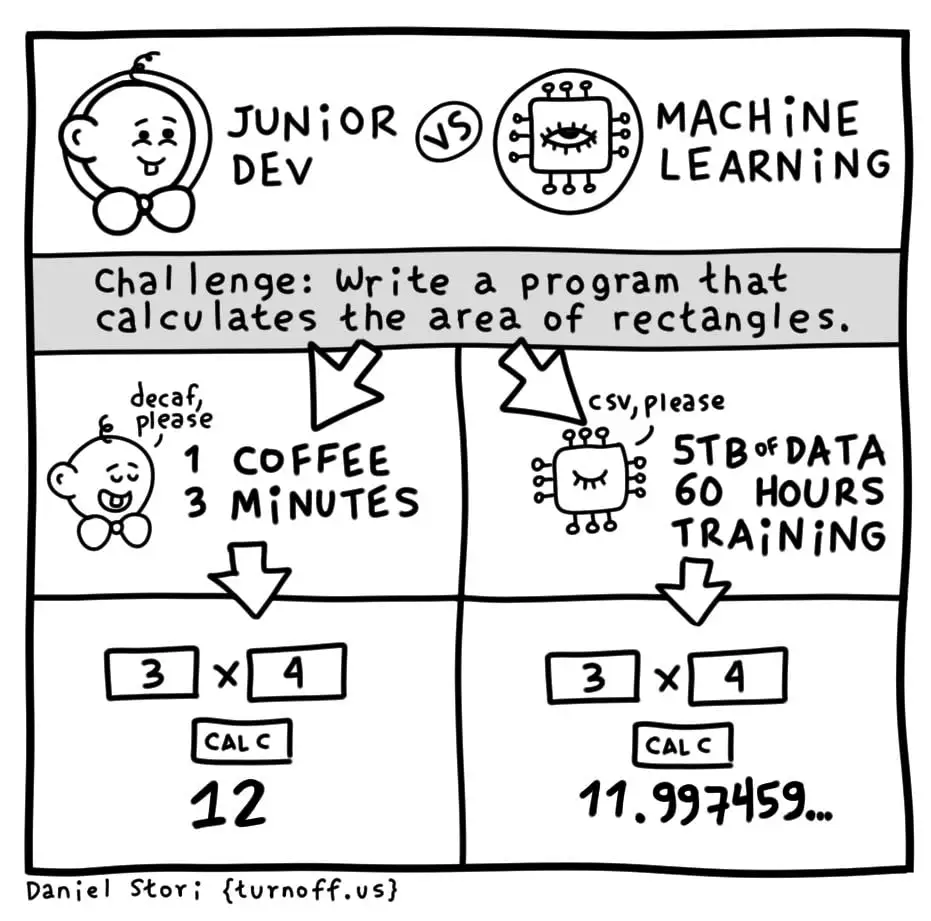

Junior Dev VS Machine Learning

This is so unrealistic. Developers don't drink decaf.

Regardless of experience, that's probably what makes him a junior.

I do, exclusively

Getting rid of caffeine (decaf still has a little) has been amazing for me.

I’m trying to switch to non-alcoholic vodka.

How so? I more than likely take in too much caffeine lol

I was shocked and appalled by this blatant inaccuracy.

Not the same, but I switched to tea mostly for aesthetic reasons, and after a brief adjustment period, I'm finding it a lot more fun and varied than coffee drinking. It's also easier to find very low-caffeine or tasty caffeine-free teas, in as many varieties as you can imagine.

I'll still have a social coffee every now and then, but anyway I'd recommend it, at least to check out. It's like discovering scotch after a lifetime of beer drinking.

Try explaining tea to others, though.

Every time I'm on-site, I'm offered exactly two options: coffee or water.

And LLMs don't get to the correct answer.

I think this comic might predate the LLM craze.

This post is not specifically about LLMs, though?

DECaf is a pseudo-abbreviation for Dangerously and Extraordinarily Caffeinated.

It has a higher KDR than a Panera Charged Lemonade.

Glucose dev here.

Agreed. If you need to calculate the area of rectangles, ML is not the right tool. Now do the comparison for an image-identifying program.

If anyone's looking for the magic dividing line: ML is a very inefficient way to do anything, but it doesn't require us to actually solve the problem, just to have a bunch of examples. For very hard but commonplace problems, this is still revolutionary.
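To make the rectangle example concrete, here is a minimal Python sketch contrasting the hand-coded formula with a toy "learned" model. The model is a 1-nearest-neighbor regressor, a deliberately simple stand-in rather than anything a real ML system would use: it never learns the formula, it only memorizes labeled example rectangles and returns the area of the closest one. The names `area_formula`, `train_examples`, and `predict_area_knn` are hypothetical.

```python
import math


def area_formula(width, height):
    # Hand-coded solution: we actually solved the problem.
    return width * height


def train_examples(max_side=10):
    # "Training data": labeled examples on an integer grid of sides 1..max_side.
    return [(w, h, area_formula(w, h))
            for w in range(1, max_side + 1)
            for h in range(1, max_side + 1)]


def predict_area_knn(examples, width, height):
    # 1-nearest-neighbor regression: return the label of the closest example.
    # No formula is learned; accuracy depends entirely on example coverage.
    _, _, label = min(examples,
                      key=lambda e: math.hypot(e[0] - width, e[1] - height))
    return label


examples = train_examples()
print(area_formula(3.4, 4.2))                # the exact answer
print(predict_area_knn(examples, 3.4, 4.2))  # 12, copied from the (3, 4) example
print(predict_area_knn(examples, 30, 40))    # 100, far outside the examples
```

The nearest-neighbor guess is roughly right inside the range it has seen and badly wrong outside it, which is the trade-off described above: no need to solve the problem, at the cost of efficiency and reliability.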

I think the joke is that the Jr. Developer sits there looking at the screen, a picture of a cat appears, and the Jr. Developer types "cat" on the keyboard then presses enter. Boom, AI in action!

The truth behind the joke is that many companies selling "AI" have lots of humans doing tasks like this behind the scenes. "AI" is more likely to get VC money, though, so it's "AI", I promise.

It's also Blockchain and uses quantum computers somehow. /s

The correct tool for calculating the area of a rectangle is an elementary school kid who really wants that A.

Exactly. Explaining to a computer what a photo of a dog looks like is super hard. Every rule you can come up with has exceptions or edge cases. But if you show it millions of dog pictures and millions of not-dog pictures it can do a pretty decent job of figuring it out when given a new image it hasn't seen before.

Another problem is people using LLMs as if they were some general-purpose form of ML.

I think it's still faster than actual solutions in some cases. I've seen someone train an ML model to animate a cloak realistically, based on an existing physics simulation of it, and it cut the processing time down to a fraction.

I suppose that's more because it's not doing a full physics simulation; it's just parroting the cloak-specific physics it observed. But still.

This. I'm sure to a sufficiently intelligent observer it would still look wrong. You could probably achieve the same thing with a conventional algorithm, it's just that we haven't come up with a way to profitably exploit our limited perception quite as well as the ML does.

In the same vein, one of the big things I'm waiting on is somebody making an NN pixel shader. Even a modest network can achieve a photorealistic look very easily.

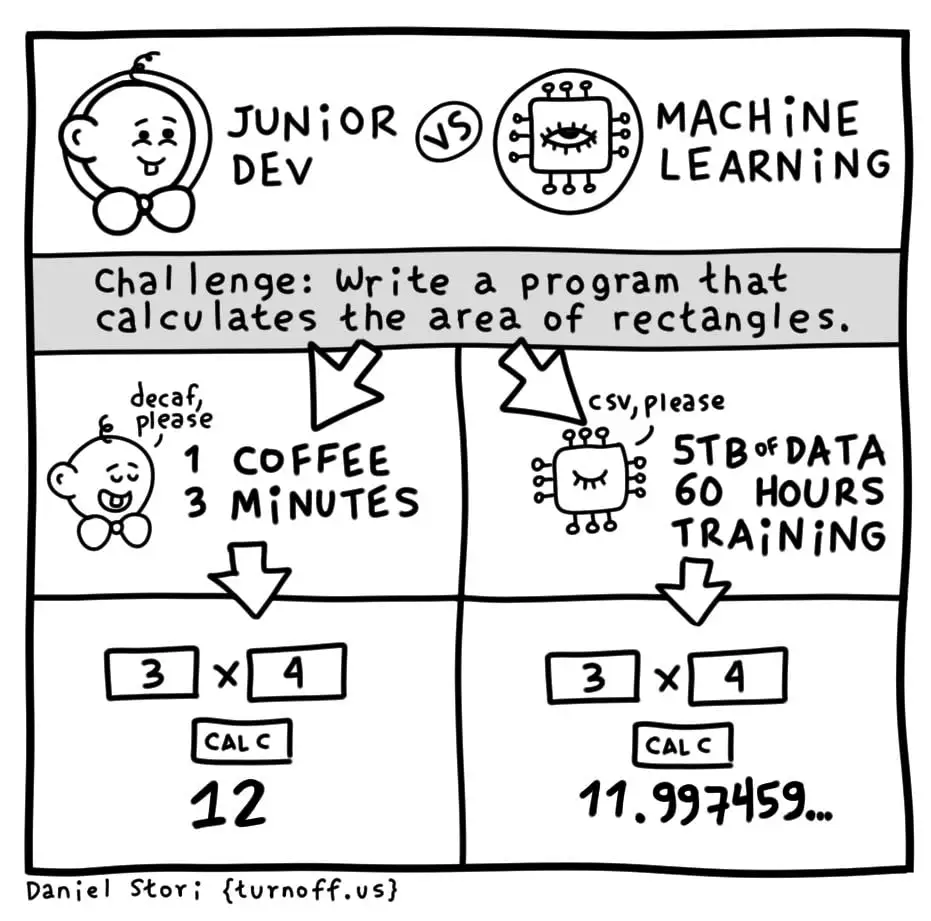

What ChatGPT actually comes up with in about 3 mins.

The comic is about using a machine learning algorithm instead of a hand-coded algorithm. It's not about using ChatGPT to write a trivial program that no doubt appears a thousand times in the data it was trained on.

The strengths of Machine Learning are in the extremely complex programs.

Programs no junior dev would be able to accomplish.

So if the post can misrepresent the issue, then the commenter can do so too.

GPT is ML

Nice, that saves the coffee.

But at what cost 😔

Ahh, the future of dev. Having to compete with AI and LLMs, while also being forced to hastily build apps that use those things, until those things can build the app themselves.

Let's invent a thing inventor, said the thing inventor inventor after being invented by a thing inventor.

You could make a religion out of this.

And also, as a developer, you have to deal with the way Star Trek just isn't as good as it used to be.

Because you're all fucking nerds.

(Me too tho)

SNW has been thoroughly enjoyable so far.

I mean, if you have access but aren't using Copilot at work, you're just slowing yourself down. It works extremely well for boilerplate and repetitive declarations.

I've been working with third-party APIs recently and have written some wrappers around them. Generally, by the third method, it's correctly autosuggesting the entire method given only a name, and I can point out mistakes in English or quickly fix them myself. It also makes working in languages I'm not familiar with way easier.

AI for assistance in programming is one of the most productive uses for it.

Oh, I use Copilot daily. It fills the gaps for the repetitive stuff, like you said. I was writing Stories in a Storybook.js project once and got it to auto-suggest all of my remaining component states after writing 2-3 of them. They worked out of the gate, too, with maybe a single variable change. Initially, I wasn’t even going to do all of them in that coding session, just to save time and get it handed off, but it was giving me such complete suggestions that I was able to build every single one out with interaction tests and everything.

Outside of use cases like that and generating very general content, I think AI is a mess. I’ve worked with ChatGPT’s API (GPT-3.5 and GPT-4) a ton, and it’s unpredictable and sometimes hard to instruct. Prompts and approaches that worked two weeks ago will suddenly give you some weird edge case that you just can’t get it to stop repeating, even when using approaches that worked flawlessly for others. It’s like trying to patch a boat while you’re in it.

The C-suite people and suits jumped on AI way too early and have haphazardly forced it into every corner. It’s become a solution in search of a problem. The other day, a friend of mine said a client casually asked how they were going to use AI on the website they were building for them, as if it were a commonplace thing. The buzzword has gotten ahead of itself, and now we’re trying to reel it back down to earth.

Did you just post your OpenAI API key on the internet?

Nah, this is a meme post about using ChatGPT to check for even numbers instead of simple code.
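For contrast, the "simple code" the meme is being compared against fits in one line of Python. A minimal sketch; `is_even` is a hypothetical name, not taken from the image.

```python
def is_even(n: int) -> bool:
    # Python's % takes the sign of the divisor, so this also
    # works for negative integers (-3 % 2 == 1).
    return n % 2 == 0


for n in (0, 7, -4, -3):
    print(n, is_even(n))
```

No network call, no API key, and the answer is the same every time.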

Same joke as the OP, different format.

Let's put this free OpenAI API key here in ASCII, just for the sake of history and search-engine health... 😂

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

But seriously, I hope they have already changed it.

After a small test, it doesn't work.

Haha it looks that way doesn't it. Hopefully those are scoped and limited 😳

I can't wait for ChatGPT sort.

sort this d (gestures rudely at the concept of llms)

Thank you for your concern, everyone. I did not create this image.

The sad thing is that no amount of mocking the current state of ML today will prevent it from taking all of our jobs tomorrow. Yes, there will be a phase where programmers like myself, who refuse to use LLMs as a tool to produce work faster, will be pushed out by those who work with LLMs. However, I console myself with the belief that this phase won't last even a full generation: even those collaborative devs will find themselves made redundant, and we'll reach the same end without my having to eliminate the one enjoyable part of my job. I do not want to be reduced to being only a debugger for something else's code.

Thing is, at the point AI becomes self-improving, the last bastion of human-led development will fall.

I guess mocking and laughing now is about all we can do.

> at the point AI becomes self-improving

This is not a foregone conclusion. Machines have mostly always been stronger and faster than humans, because humans are generally pretty weak and slow. Our strength is adaptability.

As anyone with a computer knows, if one tiny thing goes wrong it messes up everything. They are not adaptable to change. Most jobs require people to be adaptable to tiny changes in their routine every day. That's why you still can't replace accountants with spreadsheets, even though they've existed in some form for 50 years.

It's just a tool. If you don't want to use it, that's kinda weird. You aren't just "debugging" things. You use it as a junior developer who can do basic things.

> This is not a foregone conclusion.

Sure, I agree. There's many a slip 'twixt the cup and the lip. However, I've seen no evidence that it won't happen, or that humans hold any inherent advantage over AI (nascent as it may be in its current crude forms of LLMs and deep learning).

If you want something to reflect upon, your statement about humans having the advantage of adaptability sounds exactly like the previous generation's grasping at an inherent human superiority that would be our salvation: creativity. It wasn't too long ago that people claimed machines would never be able to compose a sonnet or paint a "Starry Night," and yet creativity has been one of the first walls to fall. And anyone claiming that ML only copies and doesn't produce anything original has obviously never studied the history of fine art.

Since no one would now claim that machines will never surpass humans in art, the goalposts have shifted to adaptability? This is an even easier hurdle. Computer hardware is evolving enormously faster than human hardware. With the exception of the few brief years at the start of our lives, computer software is more easily modified, updated, and improved than our poor connective neural networks. It isn't even a competition: computers are vastly better equipped to adapt quickly than we are. As soon as adaptability becomes a priority, they'll easily exceed us.

I do agree, there are a lot of ways this future might not come to pass. Personally, I think it's most likely we'll drive ourselves extinct, or at least destroy the society able to keep creating computers. Alternatively, we may hit hardware limits. Quantum computing could stall out. Or we may find that the way we create AI cripples it the same way we are crippled: built-in biases, inefficiencies in thinking, or simply resource demands too high for complexity much beyond what two humans can create with far less effort and very little motivation.

Well, we could end capitalism, and demand that AI be applied to the betterment of humanity, rather than to increasing profits, enter a post-scarcity future, and then do whatever we want with our lives, rather than selling our time by the hour.

The only way I see that happening is if the entire economy collapses because nobody has jobs, which might actually happen pretty soon 🤷

Amen. Let's do that thing: you have my vote.

The best part is that dumbass devs are actively working on self improving AI that will take their jobs.

Well, if training is included, then why isn't it included for the developer, starting from the first days of his life?

The difference is that the dev paid for their training themselves

Sort of... If the dev didn't pay for their training, they wouldn't need as big of a wage to pay off their training debt (the usual scenario I'd wager).

So in a way the company is currently paying off the debt for the Devs training, most of the time.

OpenAI, the company, also paid for its LLM's training and then sells the LLM to users.

When did the training happen? The LLM is trained for the task starting when the task is assigned. The developer's training has already completed, for this task at least.

No? The LLM was trained before you ever even interacted with it. They're not going to train a model on the fly each time you want to use it, that's fucking ridiculous.

I see no mention of Hitler nor abusive language, are you sure that's a real AI? /s :-P

To be fair, how many more years of training than the AI did the human have before being fit to even attempt this problem?

And hundreds of thousands of years of evolution pre-training the base model that their experience was layered on top of.

Exactly, people don't seem to understand that our intelligence/problem solving ability is based on two major factors.

Without these, a human is useless. We have training data as well; it's just organic and learned over a lifetime, on top of the billions of years of life evolving on this planet.

I don't know why, but the "Mechanical Turk" keeps cropping up when I think about this sort of stuff.

I'm hoping even a junior dev has had more than 60 hours of training.

Yea, but does the AI ask me why “x” doesn’t work as a multiplication operator 14 times while complaining about how this would be easier in Rust?

But which consumes more energy? Like really. I'm betting AI does, but some tasks might be close.

The future unifying metric for productivity should be joules per line of code. If you cost more than a machine, you get laid off.

Time to configure a formatter to insert a newline wherever the code is still syntactically valid.
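A minimal Python sketch of the joules-per-line-of-code metric proposed above, and of why the formatter trick games it. The function name and all numbers are hypothetical, chosen only for illustration.

```python
def joules_per_loc(energy_joules: float, lines_of_code: int) -> float:
    # Energy spent per line of code produced (lower looks "better").
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return energy_joules / lines_of_code


# Hypothetical numbers, for illustration only.
energy = 3_600_000.0  # 1 kWh expressed in joules
loc = 200

print(joules_per_loc(energy, loc))      # 18000.0
# Reformatting the same code so every statement spans two lines doubles
# the line count and halves the "cost" without changing any behavior.
print(joules_per_loc(energy, loc * 2))  # 9000.0
```

Any per-line metric has this weakness: line count measures formatting as much as work.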

Machine learning also needs tons of carbon burned at a local power plant in order to run.

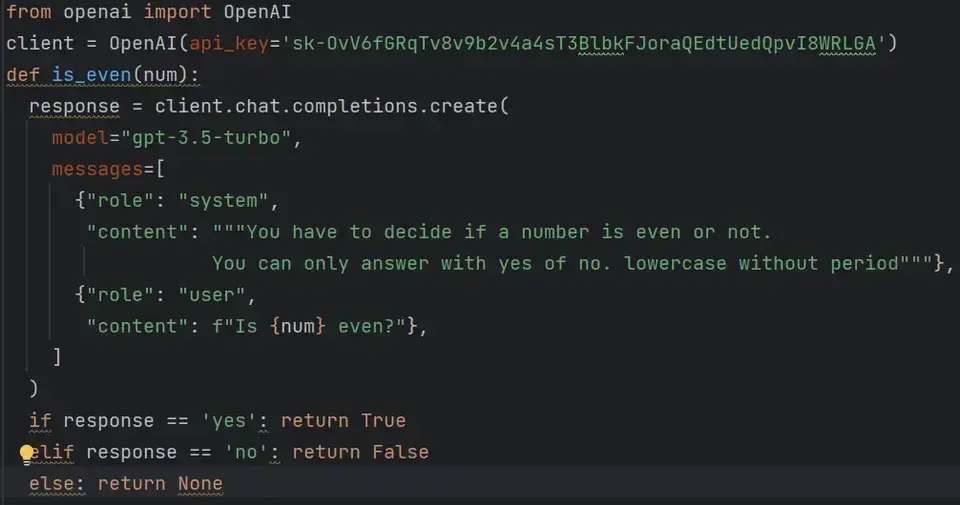

This is all funny and stuff, but ChatGPT knows how long the German-Italian border is, and I'm sure most of you don't.

Nobody knows how long any border is if it adheres to any natural boundaries. The only borders we know precisely are post-colonial perfectly straight ones.
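This is the coastline paradox: the measured length of a wiggly boundary depends on how finely you measure it. A minimal Python sketch, using one edge of a Koch snowflake as an idealized stand-in for a natural border (a fractal, not real geographic data), measures the same curve at finer and finer resolution. Each refinement reveals bumps a third the size, and the measured length grows by a factor of 4/3, so there is no single "true" length. The helper names are hypothetical.

```python
import math

Point = tuple[float, float]


def koch_step(points: list[Point]) -> list[Point]:
    # Replace each segment with the four segments of the Koch construction.
    cos60, sin60 = 0.5, math.sqrt(3) / 2
    out: list[Point] = [points[0]]
    for (x1, y1), (x2, y2) in zip(points, points[1:]):
        dx, dy = (x2 - x1) / 3, (y2 - y1) / 3
        a = (x1 + dx, y1 + dy)
        b = (x1 + 2 * dx, y1 + 2 * dy)
        # Apex of the bump: (dx, dy) rotated by +60 degrees, starting at a.
        peak = (a[0] + dx * cos60 - dy * sin60,
                a[1] + dx * sin60 + dy * cos60)
        out.extend([a, peak, b, (x2, y2)])
    return out


def polyline_length(points: list[Point]) -> float:
    # Sum of straight-line distances between consecutive points.
    return sum(math.dist(p, q) for p, q in zip(points, points[1:]))


curve: list[Point] = [(0.0, 0.0), (1.0, 0.0)]
for depth in range(6):
    print(depth, round(polyline_length(curve), 4))
    curve = koch_step(curve)
```

The endpoints never move, yet the reported length keeps climbing with every level of detail, which is why border lengths for natural boundaries are always approximate.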

Well, for non-adjacent countries, the answer is still straightforward

I apparently have too much free time and wanted to check, so I asked ChatGPT exactly how long the border was. It could only give an approximate guess, and it had to search using Bing to confirm.

Here I am, wondering why no one has made the joke that the answer was not found (404), but ChatGPT assumed it was the answer 😂

Google's AI gives it as:

The length of the German-Italian border depends on how you define the border. Here are two ways to consider it:

Total land border: This includes the main border between the two countries, as well as the borders of enclaves and exclaves. This length is approximately 811 kilometers (504 miles).

Land border excluding exclaves and enclaves: This only considers the main border between the two countries, neglecting the complicated enclaves and exclaves within each country's territory. This length is approximately 756 kilometers (470 miles).

It's important to note that the presence of exclaves and enclaves creates some interesting situations where the border crosses back and forth within the same territory. Therefore, the definition of "border" can influence the total length reported.

That's a number I never got. I got either 700-something km or 1,000-something. Only sometimes does ChatGPT realize that Austria and Switzerland lie in between and there is no direct border.

Make sure you ask the AI not to hallucinate because it will sometimes straight up lie. It’s also incapable of counting.

But where's the fun in it if I can't make it hallucinate?