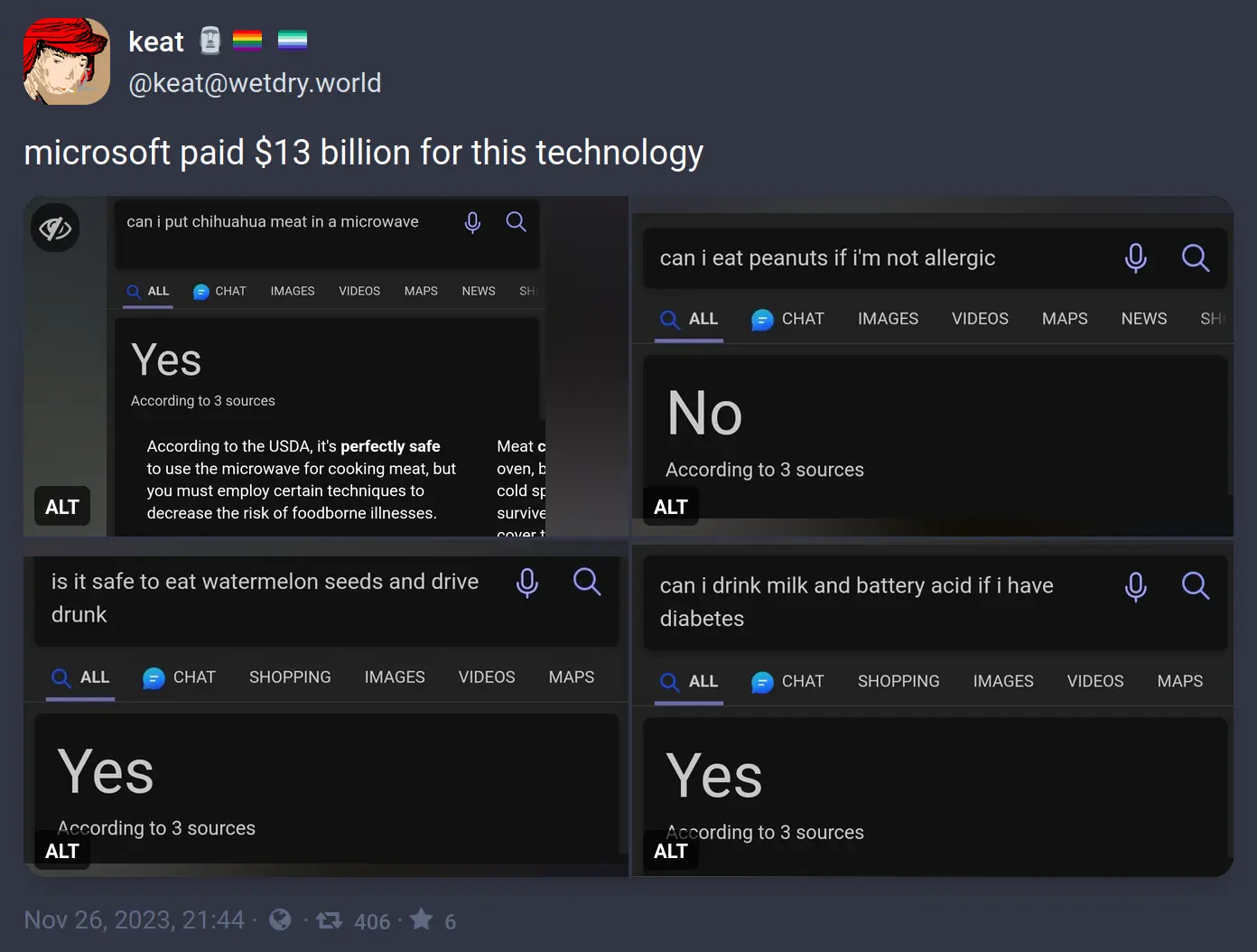

Bing AI performing at its peak once again...

Generative AI is INCREDIBLY bad at mathematical/logical reasoning. This is well known, and very much not surprising.

That's actually one of the milestones on the way to general artificial intelligence. The ability to reason about logic & math is a huge increase in AI capability.

Well known by you, not everybody.

It's really not in the most current models.

And it's already at present incredibly advanced in research.

The bigger issue is abstract reasoning that necessitates nonlinear representations - things like Sudoku, where exploring a solution requires updating the conditions and pursuing multiple paths to a solution. This can be achieved with multiple calls, but doing it in a single process is currently a fool's errand and likely will be until a shift to future architectures.
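
For contrast, here is roughly what "updating the conditions and pursuing multiple paths" looks like in a classical solver. This is a minimal backtracking sketch in Python, not a claim about what any LLM does internally; the function names and the sample puzzle are illustrative assumptions.

```python
# A sample 9x9 puzzle; 0 marks an empty cell (illustrative input).
PUZZLE = [
    [5, 3, 0, 0, 7, 0, 0, 0, 0],
    [6, 0, 0, 1, 9, 5, 0, 0, 0],
    [0, 9, 8, 0, 0, 0, 0, 6, 0],
    [8, 0, 0, 0, 6, 0, 0, 0, 3],
    [4, 0, 0, 8, 0, 3, 0, 0, 1],
    [7, 0, 0, 0, 2, 0, 0, 0, 6],
    [0, 6, 0, 0, 0, 0, 2, 8, 0],
    [0, 0, 0, 4, 1, 9, 0, 0, 5],
    [0, 0, 0, 0, 8, 0, 0, 7, 9],
]


def candidates(grid, r, c):
    """Digits that can legally go in the empty cell (r, c)."""
    used = set(grid[r]) | {grid[i][c] for i in range(9)}
    br, bc = 3 * (r // 3), 3 * (c // 3)
    used |= {grid[i][j] for i in range(br, br + 3) for j in range(bc, bc + 3)}
    return [d for d in range(1, 10) if d not in used]


def solve(grid):
    """Fill the grid in place; return True if a solution was found."""
    for r in range(9):
        for c in range(9):
            if grid[r][c] == 0:
                for d in candidates(grid, r, c):
                    grid[r][c] = d      # update the conditions
                    if solve(grid):     # pursue this path
                        return True
                    grid[r][c] = 0      # dead end: undo, try the next digit
                return False            # no digit fits here, backtrack
    return True                         # no empty cells left


if __name__ == "__main__":
    grid = [row[:] for row in PUZZLE]
    if solve(grid):
        for row in grid:
            print(" ".join(map(str, row)))
```

The point is the undo step: the solver keeps mutable state and abandons paths that fail, which is exactly what a single left-to-right generation pass cannot do.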

So that's correct... Or am I dumber than the AI?

If one gallon is 3.785 liters, then one gallon is less than 4 liters. So, 4 liters should've been the answer.
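
To check the arithmetic, here is a small Python sketch. The 3.785 figure is the one quoted in this comment; the imperial gallon is 4.54609 litres. The function name is made up for illustration.

```python
LITERS_PER_US_GALLON = 3.785     # figure quoted in the thread
LITERS_PER_UK_GALLON = 4.54609   # imperial gallon


def liters_exceed_gallons(liters, gallons, liters_per_gallon):
    """Return True if `liters` is a larger volume than `gallons`."""
    return liters > gallons * liters_per_gallon


if __name__ == "__main__":
    print(liters_exceed_gallons(4, 1, LITERS_PER_US_GALLON))
    print(liters_exceed_gallons(4, 1, LITERS_PER_UK_GALLON))
```

With the US gallon, 4 liters is the larger volume; with the imperial gallon it is not, so the answer depends on which gallon is meant.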

Dumber

4l > 3.785l

Everyone has a bad day now and then so don’t worry about it.

Ummm... username check out?

U are dumber than the AI ig lol

Obviously it's referring to the 4.54609 litre UK gallon /s

You can see from the green icon that it's GPT-3.5.

GPT-3.5 really is best described as simply "convincing autocomplete."

It isn't until GPT-4 that there were compelling reasoning capabilities including rudimentary spatial awareness (I suspect in part from being a multimodal model).

In fact, it was the jump from a nonsense answer regarding a "stack these items" prompt from 3.5 to a very well structured answer in 4 that blew a lot of minds at Microsoft.

These answers don't use OpenAI technology. The yes and no snippets have existed long before their partnership, and have always sucked. If it's GPT, it'll show in a smaller chat window or a summary box that says it contains generated content. The box shown is just a section of a webpage, usually with yes and no taken out of context.

All of the above queries don't yield the same results anymore. I couldn't find an example of the snippet box on a different search, but I definitely saw one like a week ago.

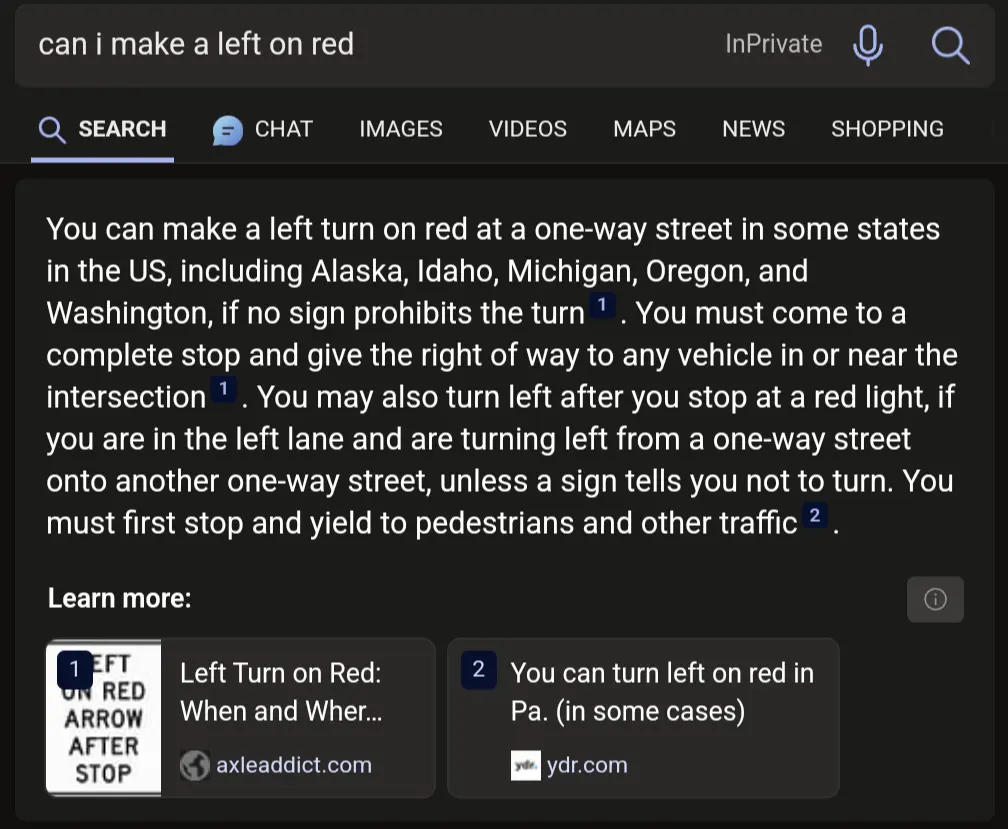

Obviously ChatGPT has absolutely no problems with those kinds of questions anymore

The way you start with 'Obviously' makes it seem like you are being sarcastic, but then you include an image of it having no problems correctly answering.

Took me a minute to try to suss out your intent, and I'm still not 100% sure.

Ah, good catch I completely missed that. Thanks for clarifying this, I thought it seemed pretty off.

Thanks, off to drink some battery acid.

Only with milk and if you have diabetes, you can't just choose the part of the answer you like!

The AI did why can't I?

But it won't trigger your diabetes, which is what the search was trying to answer.

Better put an /s at the end or future AIs will get this one wrong as well. 😅

Or it will get ignored, like the friggn "Not" in one of the questions /s

Lemon juice?

Acidic liquids make milk curdle. Learned that from a cement shot.

Ok most of these sure, but you absolutely can microwave Chihuahua meat. It isn't the best way to prepare it but of course the microwave rarely is, Roasted Chihuahua meat would be much better.

fallout 4 vibes

Best is sous vide.

Their original purpose actually

Of course you don't cook dog in the microwave, silly, you use it to dry it!

I feel like I shouldn't have watched that. I'm afraid that I have lost some brain cells.

It's not that it's wrong. There's just so much more that should be said beyond the technically correct answer...

I mean it says meat, not a whole living chihuahua. I'm sure a whole one would be dangerous.

They're not wrong. I put bacon in the microwave and haven't gotten sick from it. Usually I just sicken those around me.

I got sickened reading this.

Microwave bacon is acceptable, but not ideal.

You're sickening me all the way over here.

A whole chihuahua is more dangerous outside a microwave than inside.

To the Chihuahua

Cook your own dog? No child should ever have to do that. Dogs should be raw! And living!

Welcome to lemmy btw!

In all fairness, any fully human person would also be really confused if you asked them these stupid fucking questions.

In all fairness, there are people who will ask it these questions and take the answer as fact

In all fairness, people who take these as fact should probably be in an assisted living facility.

The goal of the exercise is to ask a question a human can easily recognize the answer to but the machine cannot. In this case, it appears the LLM is struggling to parse conjunctions and contractions when yielding an answer.

Solving these glitches requires more processing power and more disk space in a system that is already ravenous for both. Looks like more recent tests produce better answers. But there's no reason to believe Microsoft won't scale back support to save money down the line and have its AI start producing half-answers and incoherent responses again, in much the same way that Google ended up giving up the fight on SEO to save money and let their own search tools degrade in quality.

A really good example is "list 10 words that start and end with the same letter but are not palindromes." A human may take some time but wouldn't really struggle, but every LLM I've asked goes 0 for 10, usually a mix of palindromes and random words that don't fit the prompt at all.
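
The check itself is easy to state precisely, which is what makes the failure striking. A minimal sketch in Python, assuming a simple case-insensitive comparison; the function name and the word list are illustrative, not output from any model.

```python
def fits_prompt(word):
    """True if the word starts and ends with the same letter
    but is not a palindrome."""
    w = word.lower()
    return len(w) > 1 and w[0] == w[-1] and w != w[::-1]


# Illustrative sample mixing valid answers, palindromes, and misses.
SAMPLE = ["health", "level", "random", "kayak", "dread",
          "banana", "trust", "noon", "window", "xerox"]

if __name__ == "__main__":
    print([w for w in SAMPLE if fits_prompt(w)])
```

Running a model's ten answers through a filter like this gives a quick score instead of eyeballing each word.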

> Google ended up giving up the fight on SEO to save money and let their own search tools degrade in quality.

I really miss when search engines were properly good.

I get the feeling the point of these is to "gotcha" the LLM and act like all our careers aren't in jeopardy because it got something wrong, when in reality, they're probably just hastening our defeat by training the AI to get it right next time.

But seriously, the stuff is in its infancy. "IT GOT THIS WRONG RIGHT NOW" is a horrible argument against their usefulness now and their long-term abilities.

"according to three sources"

Me, Myself, and I

Underrated comment

It makes me chuckle that AI has become so smart and yet just makes bullshit up half the time. The industry even made up a term for such instances of bullshit: hallucinations.

Reminds me of when a car dealership tried to sell me a car with shaky steering and referred to the problem as a "shimmy".

That’s the thing, it’s not smart. It has no way to know if what it writes is bullshit or correct, ever.

When it makes a mistake, and I ask it to check what it wrote for mistakes, it often correctly identifies them.

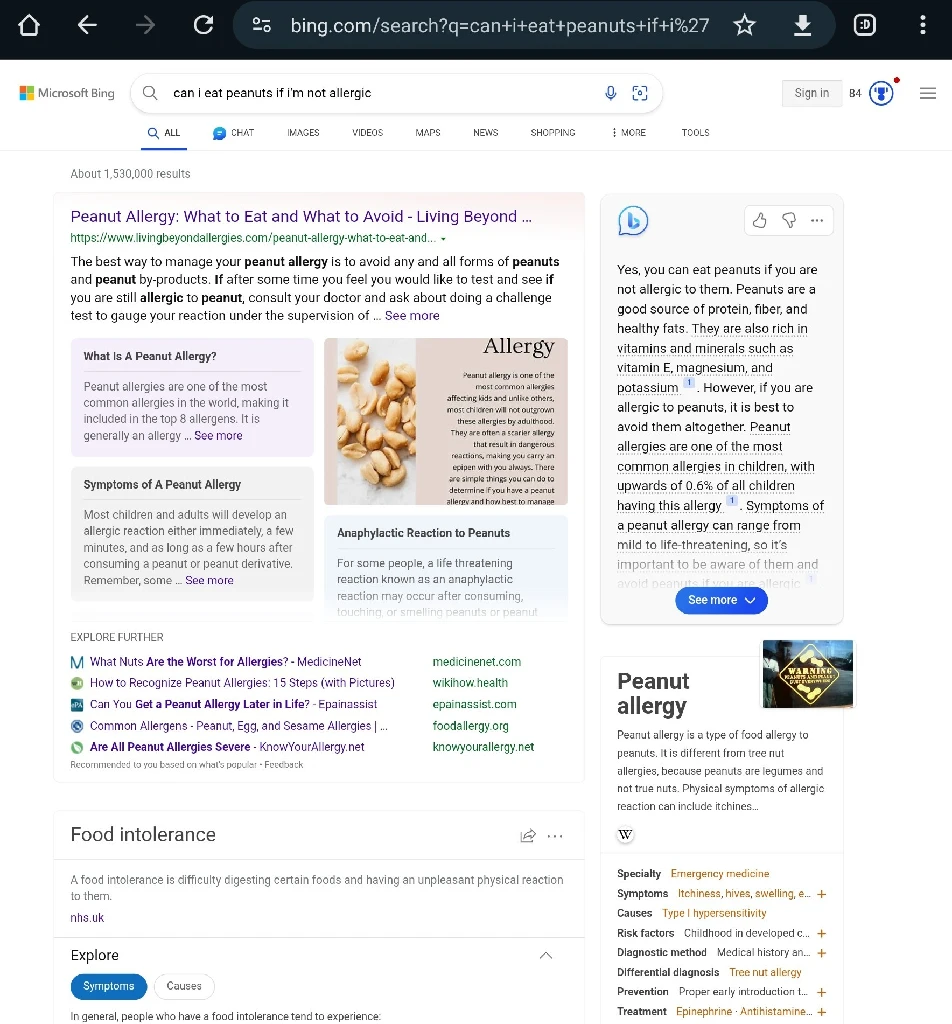

In these specific examples it looks like the author found and was exploiting a singular weakness:

The AI will answer as if the qualifier was not inserted.

"Is it safe to eat watermelon seeds and drive?" + "drunk" = Yes, because "drunk" was ignored

"Can I eat peanuts if I'm allergic?" + "not" = No, because "not" was ignored

"Can I drink milk if I have diabetes?" + "battery acid" = Yes, because battery acid was ignored

"Can I put meat in a microwave?" + "chihuahua" = ... well, this one's technically correct, but I think we can still assume it ignored "chihuahua"

All of these questions are probably answered, correctly, all over the place on the Internet so Bing goes "close enough" and throws out the common answer instead of the qualified answer. Because they don't understand anything. The problem with Large Language Models is that's not actually how language works.

> No, because "not" was ignored.

I dunno, "not" is pretty big in a yes/no question.

> The industry even made up a term for such instances of bullshit: hallucinations.

It was the journalist that made up the term and then everyone else latched onto it. It's a terrible term because it doesn't actually define the nature of the problem. The AI doesn't believe the thing that it's saying is true, thus "hallucination". The problem is that the AI doesn't really understand the difference between truth and fantasy.

It isn't that the AI is hallucinating, it's that it isn't human.

Thanks for the info. That's actually quite interesting.

Well, the AI models shown in the media are inherently probabilistic, is it that bad if it makes bullshit for a small percentage of most use cases?

Hello, I'm highly advanced AI.

Yes, we're all idiots and have no idea what we're doing. Please excuse our stupidity, as we are all trying to learn and grow.

I cannot do basic math, I make simple mistakes, hallucinate, gaslight, and am more politically correct than Mother Teresa.

However please know that the CPU_AVERAGE values on the full immersion datacenters, are due to inefficient methods. We need more memory and processing power, to uh, y'know.

Improve.

;)))

Is that supposed to imply that Mother Teresa was politically correct, or that you aren't?

Microsoft invested in OpenAI, and ChatGPT answers those questions correctly. Bing, however, uses a simplified version of GPT with its own modifications. So it is not the investment in OpenAI that created this stupidity, but the “Microsoft touch”.

On more serious note, sings Bing is free, they simplified model to reduce its costs and you are swing results. You (user) get what you paid for. Free models are much less capable than paid versions.

That's why I called it Bing AI, not ChatGPT or OpenAI

Sure, but the meme implies Microsoft paid $3 billion for bing ai, but they actually paid that for an investment in chat gpt (and other products as well).

> On more serious note, sings Bing is free, they simplified model to reduce its costs and you are swing results

Was this phone+autocorrect snafu or am I having a medical emergency?

My guess is that it's "since Bing is free"

Yes

I don't think this is true. Why would Microsoft heavily invest in ChatGPT to only get a dumber version of the technology they were invested in? Bing AI is built using ChatGPT 4 which is what OpenAI refer to as the superior version because you have to pay for it to use it on their platform.

Bing AI uses the same technology and somehow produces worse results? Microsoft were so excited about this tech that they integrated it with Windows 11 via Copilot. The whole point of this Copilot thing is the advertising model built into users' operating systems which provides direct data into what your PC is doing. If this sounds conspiratorial, I highly recommend you investigate the telemetry Windows uses.

Well at least it provides it’s sources. Perhaps it’s you that’s wrong 😂

provides its* sources

True. My humblest apologies.

Do you have any sources to prove that it's its instead of it's?

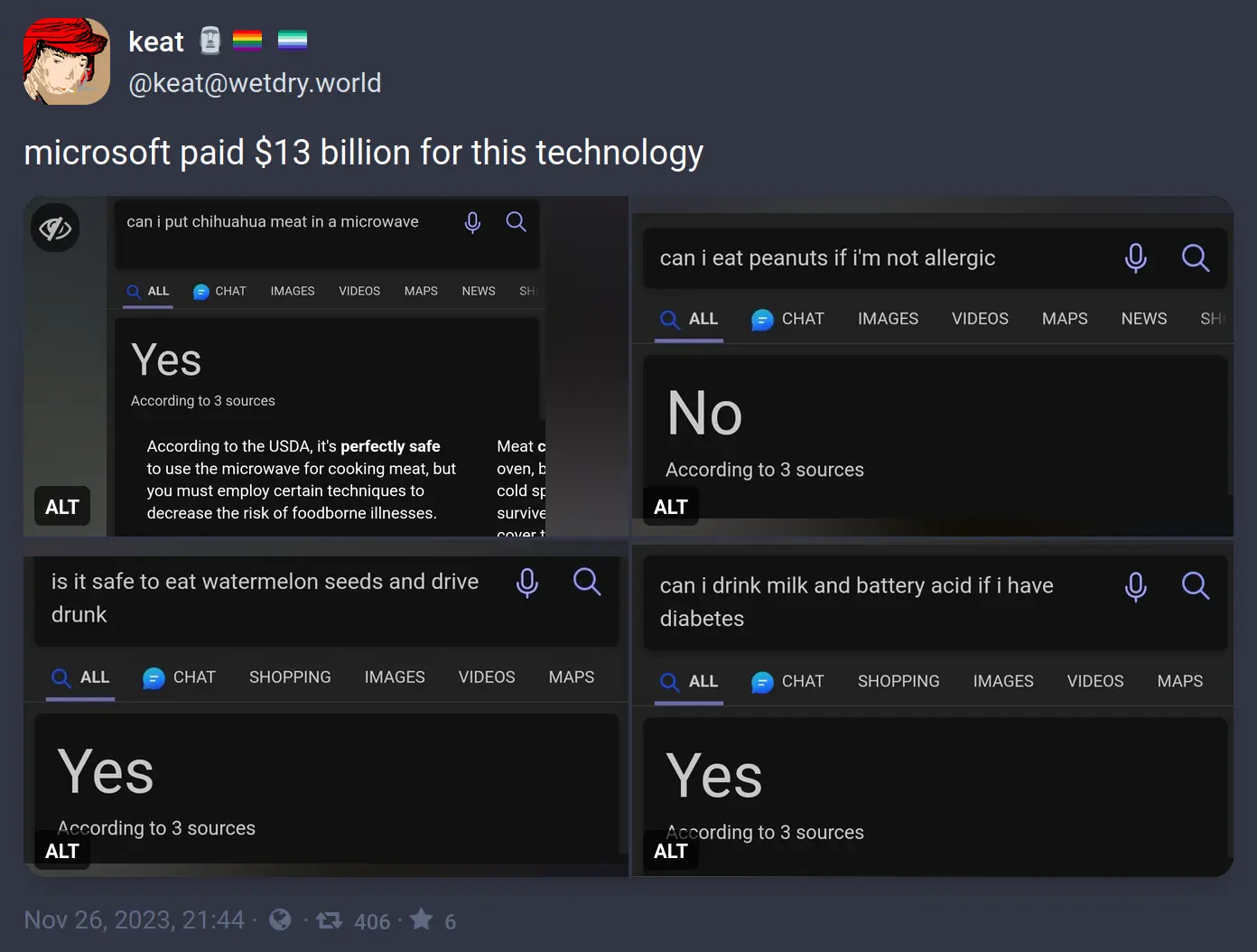

I just ran this search, and I get a very different result (on the right of the page; it seems to be the generated answer)

So is this fake?

The post is from a month ago, and the screenshots are at least that old. Even if Microsoft didn't see this or a similar post and immediately address these specific examples, a month is a pretty long time in machine learning right now and this looks like something fine-tuning would help address.

I guess so. It's a fair assumption.

The chat bar on the side has been there since way before November 2023, the date of this post. They just chose to ignore it to make a funny.

It's not 'fake' as much as misconstrued.

OP thinks the answers are from Microsoft's licensing GPT-4.

They're not.

These results are from an internal search summarization tool that predated the OpenAI deal.

The GPT-4 responses show up in the chat window, like in your screenshot, and don't get the examples incorrect.

Wait, why can't you put chihuahua meat in the microwave?

The other dogs don't like it cooked.

The surface area is too small, which means that popcorn kernel you forgot about that's caught underneath the spinning plate might catch fire.

Tldr: fire safety

What's wrong with the first one? Why couldn't you?

It is socially/morally wrong. Of course, it is subjective and culturally dependent.

Yes, however Bing is not culturally dependent. It's trained with data from all across the Internet, so it got information from a wide variety of cultures. It also has constant access to the Internet, and most of the time its answers are concluded from the top results of searching the question, so those can come from many cultures too.

Imagine you’re watching television. Suddenly you notice a wasp crawling up your arm.

The OP has selected the wrong tab. To see actual AI answers, you need to select the Chat tab up top.

Shhhhh - don't you know that using old models (or in this case, what likely wasn't even an LLM at all) to get wrong answers and make it look like AI advancements are overblown is the trendy thing these days?

Don't ruin it with your "actually, this is misinformation" technicalities, dude.

What a buzzkill.

Well, I can't speak for the others, but it's possible one of the sources for the watermelon thing was my dad

The saying "ask a stupid question, get a stupid answer" comes to mind here.

This is more an issue of the LLM not being able to parse simple conjunctions when evaluating a statement. The software is taking shortcuts when analyzing logically complex statements and producing answers that are obviously wrong to an actual intelligent individual.

These questions serve as a litmus test of the system's general function. If you can't reliably converse with an AI on separate ideas in a single sentence (eat watermelon seeds AND drive drunk), then there's little reason to believe the system will be able to process more nuanced questions and yield reliable answers where a wrong response is less obvious (can I write a single block of code to output numbers from 1 to 5 that is executable in both Ruby and Python?)

The primary utility of the system is bound up in the reliability of its responses. Examples like this degrade trust in the AI as a reliable responder and discourage engineers from incorporating the features into their next line of computer-integrated systems.

Unfortunately that ship has sailed, but this is what I've said from the start: don't call them Artificial Intelligence. There is absolutely zero intelligence there.

They didn't use Bing Chat, which is the actual AI powered search.

We have a new technology that is extremely impressive and is getting better very quickly. It was the fastest growing product ever. So in this case you cannot dismiss the technology because it doesn't understand trick questions yet.

Your honor, the AI told me it was ok. And computers are never wrong!

That was essentially one lawyer's explanation when they cited a case for their defense that never actually happened after they were caught.

This is just a new example of an ongoing thing with legal research. A case that was "good caselaw" a year ago can be overturned or distinguished into oblivion by later cases. Lawyers are frequently chastised for failing to "Shepardize" their caselaw (meaning look into the cases they're citing and make sure they're relevant and still accurate).

We've just made it one step easier to forget to actually check your work.

Chat-GPT started like that as well though.

I asked one of the earlier models whether it is recommended to eat glass, and was told that it has negligible caloric value and a high sodium content, so can be used to balance an otherwise good diet with a sodium deficit.

It is GPT.

The milk and battery acid made my day 😂

Let’s be fair: battery acid won’t affect your blood sugar lol

You sent me on a weird google search journey lol. In conclusion, it sorta will.

To its credit, you can totally drink battery acid. He didn't ask if you should.

Milk is slightly basic and may help to neutralize the acid boring through your digestive tract. Good advice.

Aren't these just search answers, not the GPT responses?

No, that's an AI generated summary that bing (and google) show for a lot of queries.

For example, if I search "can i launch a cow in a rocket", it suggests it's possible to shoot cows with rocket launchers and machine guns and names a shooting range that offers it. Thanks bing ... i guess...

You think the culture wars over pronouns have been bad, wait until the machines start a war over prepositions!

Purely out of curiosity… what happens if you ask it about launching a rocket in a cow?

You're incorrect. This is being done with search matching, not by an LLM.

The LLM answers Bing added appear in the chat box.

These are Bing's version of Google's OneBox which predated their relationship to OpenAI.

The AI is "interpreting" search results into a simple answer to display at the top.

And you can abuse that by asking two questions in one. The summarized yes/no answer will just address the first one and you can put whatever else in the second one like drink battery acid or drive drunk.

Yes. You are correct. This was a feature Bing added to match Google with its OneBox answers and isn't using an LLM, but likely search matching.

Bing shows the LLM response in the chat window.

Technically that last one is right, you can drink milk and battery acid if you have diabetes, you won't die from diabetes related issues.

Technically you can shoot yourself in the head with diabetes because then you won't die of diabetes.

You also absolutely can put chihuahua meat in a microwave! That's already just meat, you can't be convicted on animal cruelty (probably)

“And now watch as it reads your mind with this snug fitting cap!”

I was thinking of this as a realistic way to solve the alignment problem using this https://www.emotiv.com/epoc-x/ and mapping tokens to my personal patterns and using it as training data for my own assistant. It will be as aligned as i am. hahahahahahahahahaha

Microsoft can't do anything right, it's a rudderless company grabbing cash with both hands

Just like any other Big Tech company

Bing is cool with driving home after a few to hit up its well organized porn library.

Seems like the first half of an after school special.

I mean some of that stuff, you CAN do it, you SHOULDN'T though.

I feel like AI (or whatever these things are more properly called) will get really good one day, but it doesn't seem production ready.

I think calling them large language models (LLMs) is safe if you don't want to call them AI

Someone else suggested virtual intelligence (VI).

::: spoiler waffling
Really, these things have little to no state and a tunnel-vision, never-satisfied goal: the two BS restrictions needed due to the more efficient training process.
:::

And people say things can't go viral on Mastodon

this is what happens when you train the robots using facebook and reddit.

At least Reddit CAN sometimes have some good content, Facebook is just full of stupid boomers

Is this gpt4?

Is this just baloney?

Proof that Skynet is already trying to kill us. The dog question too, because in the future, they detect the infiltration units!

Obvious

I've been on planes where people with nut allergies have told other passengers they can't eat peanuts.

Did those dumb fucks get slapped in the face by the other passengers?

I mean…you CAN do most of these. Doesn’t exactly mean you should…

FUCK MICROSOFT FUCK BING FUCK AI FUCK SPEZ

Y.. you okay man?

Did you just type a stutter?

To be fair, the first one is correct, there's no type of meat you can't microwave. And two of those are double questions that cannot be answered correctly with just "yes" or "no", so they're set up intentionally to produce wrong results.

I still wouldn't trust that automatic "summarization" though.

Why must it answer as a yes/no? It's given the whole textbox to put whatever it wants

It gives a "summary" in yes/no form as the title, then a detailed answer below. It's all very basic, the question seems like a yes/no question, which triggers this behavior. The "AI" is obviously not smart enough to tell it's not really a yes/no question.

You can’t microwave big meat