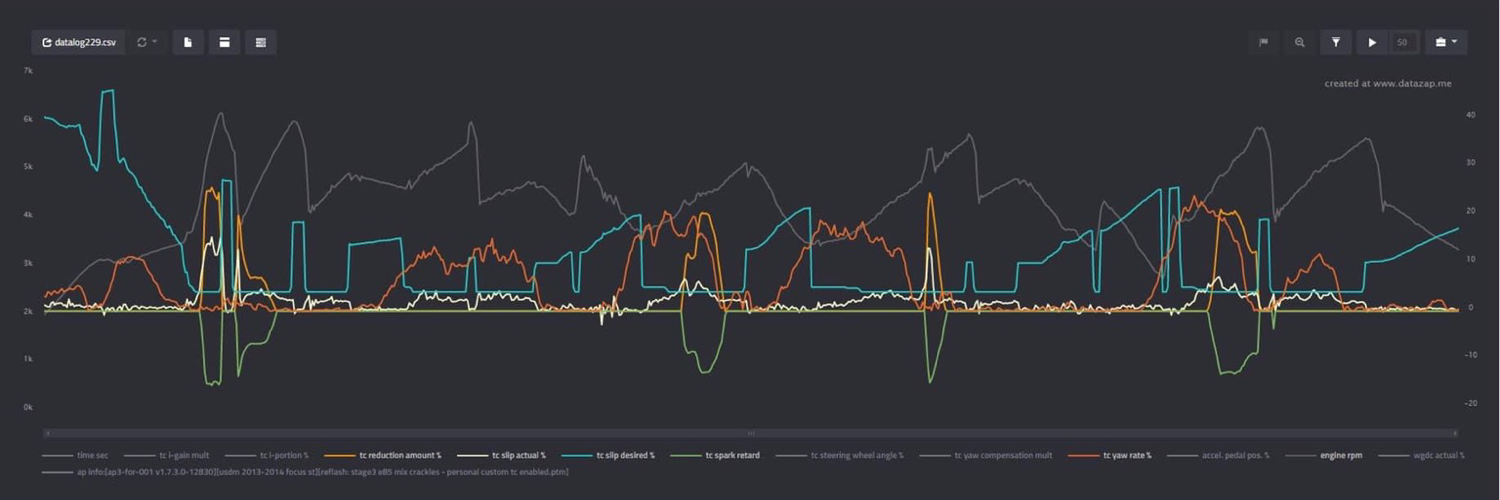

Web Dev Person / Ex Performance ECU Calibrations Person

MinimalChat Is a Full-Featured and Self-Contained LLM Chat Application

MinimalChat is a lightweight, open-source chat application that allows you to interact with various large language models. - fingerthief/minimal-chat

cross-posted from: https://infosec.pub/post/13676291

> I've been building MinimalChat for a while now, and based on the feedback I've received, it's in a pretty decent place for general use. I figured I'd share it here for anyone who might be interested!
>
> ### Quick Features Overview:
>
> * Mobile PWA Support: Install the site like a normal app on any device.
> * Any OpenAI formatted API support: Works with LM Studio, OpenRouter, etc.
> * Local Storage: All data is stored locally in the browser with minimal setup. Just enter a port and go in Docker.
> * Experimental Conversational Mode (GPT Models for now)
> * Basic File Upload and Storage Support: Files are stored locally in the browser.
> * Vision Support with Maintained Context
> * Regen/Edit Previous User Messages
> * Swap Models Anytime: Maintain conversational context while switching models.
> * Set/Save System Prompts: Set the system prompt. Prompts will also be saved to a list so they can be switched between easily.
>
> The idea is to make it essentially foolproof to deploy or set up while being generally full-featured and aesthetically pleasing. No additional databases or servers are needed, everything is contained and managed inside the web app itself locally.
>
> It's another chat client in a sea of clients but it is unique in its own ways in my opinion. Enjoy! Feedback is always appreciated!
>
> Self Hosting Wiki Section https://github.com/fingerthief/minimal-chat/wiki/Self-Hosting-With-Docker

I thought sharing here might be a good idea as well, some might find it useful!

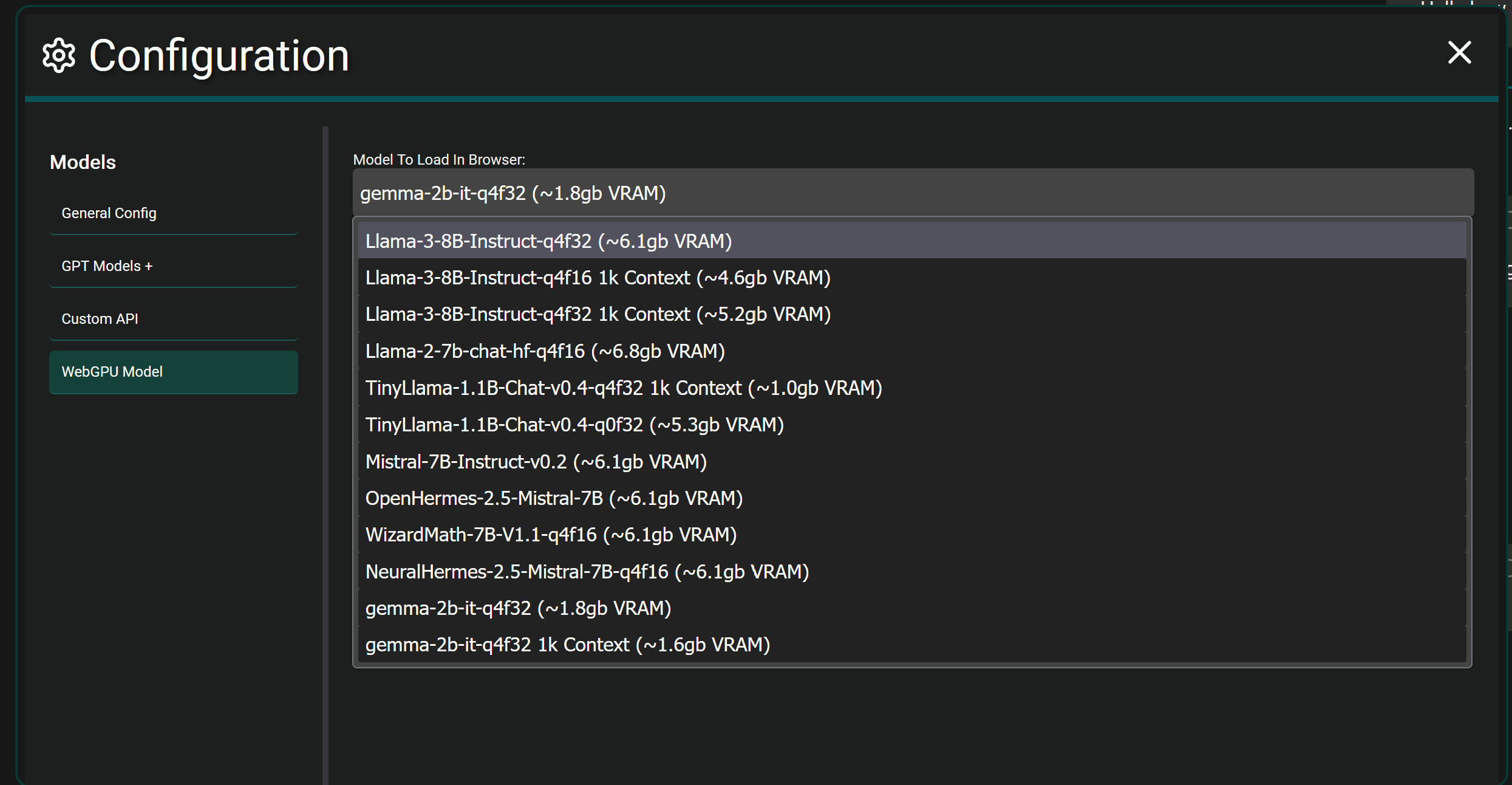

I've added some updates since the initial post that hugely improved message rendering speed and added a plethora of new models that you can load and run fully locally in your browser (Edge and Chrome) with WebGPU and WebLLM.

This project is entirely web based, using Vue 3. It doesn't use LangChain, and honestly I haven't looked into it before, but I do see they offer a JS library I could utilize. I'll definitely be looking into that!

As a result, there is currently no LLM function calling, and from what I remember apps like LM Studio don't support function calling when hosting models locally. It's definitely on my list to add the ability to retrieve outside data, like searching the web and generating a response from the results.

Yep that's a pretty good comparison!

I'm curious about what you mean by sourcing training data in an ethical way. I know OpenAI has come under well-deserved scrutiny for apparently using paywalled content in their training data without purchasing it themselves, which is quite unethical. Aside from that instance, I'm interested in hearing some other concerns for my own education.

In general there are definitely loads of models on places like Hugging Face that are fully open source, and many of them list their training data sources.

I believe for Microsoft's new Phi 3 models they actually generated synthetic training data themselves as well, which is an interesting approach that seems to yield good results.

In the open source LLM world, the new Meta Llama 3 models are the latest and greatest, and I haven't seen any cause for concern with them yet. Might be worth looking into those!

I haven't personally tried it with Ollama yet, but it should work, since it looks like Ollama can expose an OpenAI-format API: https://github.com/ollama/ollama/blob/main/docs/openai.md

I might give it a go here in a bit to test and confirm.

Local models are indeed already supported! In fact any API (local or otherwise) that uses the OpenAI response format (which is the standard) will work.

So you can use something like LM Studio to host a model locally and connect to it via the local API it spins up.
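To make "OpenAI response format" a bit more concrete, here is a rough sketch of what a request to such an API looks like. This is not MinimalChat's actual code: `buildChatRequest` is a hypothetical helper, `local-model` is a placeholder model name, and the `http://localhost:1234/v1` address is an assumption about a typical LM Studio setup, so use whatever address your local server reports.

```typescript
// Sketch: build a request for an OpenAI-style chat completions endpoint.
type Role = "system" | "user" | "assistant";

interface ChatMessage {
  role: Role;
  content: string;
}

interface ChatRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

// Hypothetical helper, not taken from the MinimalChat codebase.
function buildChatRequest(
  baseUrl: string,
  model: string,
  messages: ChatMessage[],
  apiKey?: string,
): ChatRequest {
  const headers: Record<string, string> = { "Content-Type": "application/json" };
  // Local servers usually need no key; hosted services expect a Bearer token.
  if (apiKey) {
    headers["Authorization"] = `Bearer ${apiKey}`;
  }
  return {
    // Strip trailing slashes so the path is joined cleanly.
    url: `${baseUrl.replace(/\/+$/, "")}/chat/completions`,
    method: "POST",
    headers,
    body: JSON.stringify({ model, messages }),
  };
}

// Assumed local address and placeholder model name.
const localRequest = buildChatRequest("http://localhost:1234/v1", "local-model", [
  { role: "user", content: "Hello!" },
]);
```

The resulting object can be handed to `fetch(localRequest.url, localRequest)`, and in the OpenAI format the reply text comes back under `choices[0].message.content`.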

If you want to get crazy...fully local browser models are also supported in Chrome and Edge currently. It will download the selected model in full, load it in your browser via WebGPU, and let you chat. It's more experimental and takes actual hardware power, since you're fully hosting a model in your browser itself. As seen below.

This app is more of an interface to use while connecting to any number of LLMs that have an API available. The application itself has no model.

For example you can choose to use GPT-4 Omni by providing an API key from OpenAI.

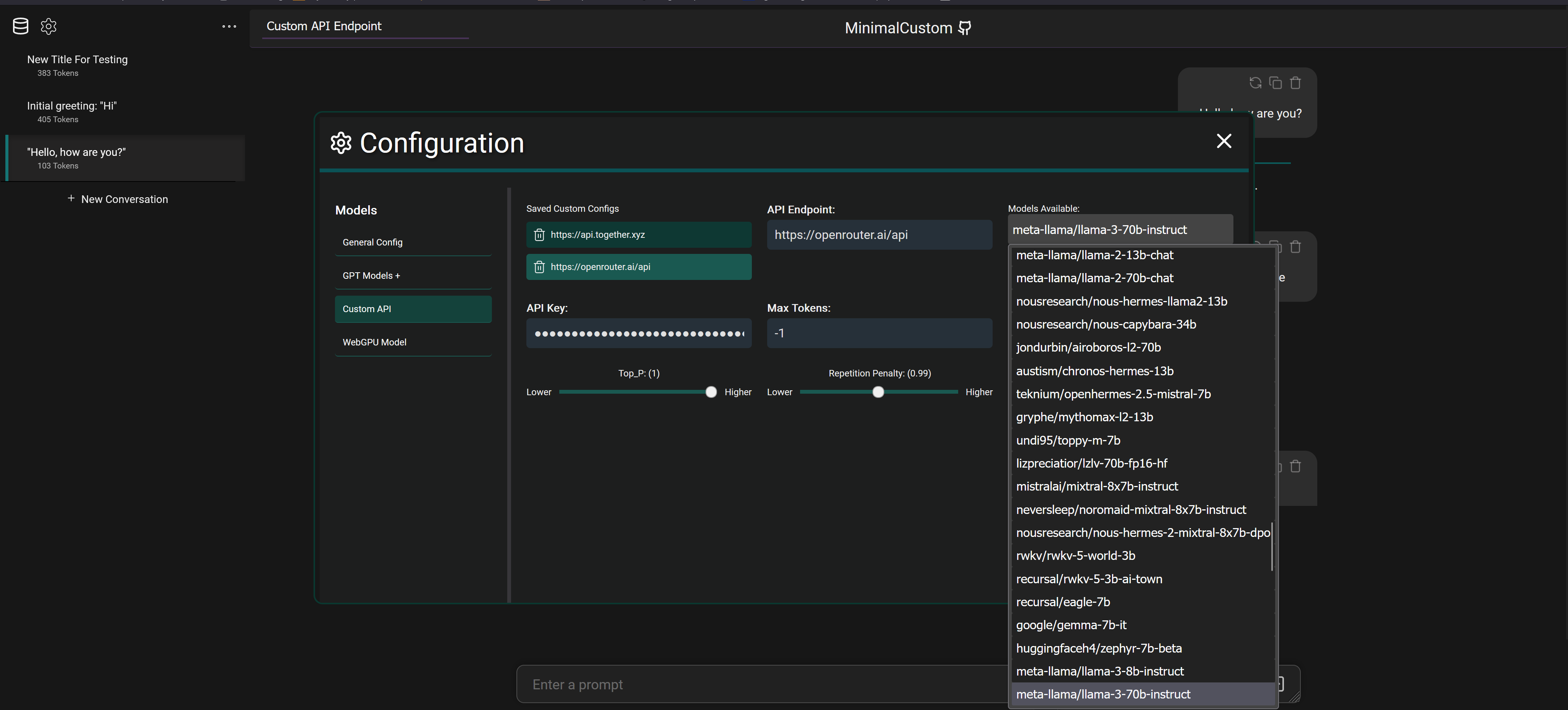

But you can also connect to services like OpenRouter with an API key and select between 20+ different models that they provide access to, as seen below.

It also supports connecting to fully local models via programs like LM Studio, which downloads models from Hugging Face to your machine and spins up a local API so you can connect and chat with the model.
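Roughly speaking, since all of these speak the same format, switching between them comes down to changing a base URL and a model name while the conversation itself stays the same. A minimal sketch of that idea follows. The `providers` table and `payloadFor` are hypothetical names for illustration, not how the app stores its settings, and the OpenRouter and LM Studio model names and the local port are assumed examples.

```typescript
interface ChatTurn {
  role: "system" | "user" | "assistant";
  content: string;
}

interface Provider {
  baseUrl: string;
  model: string;
}

// Illustrative settings only; model names and the local port are assumptions.
const providers: Record<string, Provider> = {
  openai: { baseUrl: "https://api.openai.com/v1", model: "gpt-4o" },
  openrouter: { baseUrl: "https://openrouter.ai/api/v1", model: "meta-llama/llama-3-8b-instruct" },
  lmstudio: { baseUrl: "http://localhost:1234/v1", model: "local-model" },
};

// Build the endpoint URL and JSON payload for one provider,
// reusing whatever conversation has accumulated so far.
function payloadFor(
  providerName: string,
  conversation: ChatTurn[],
): { url: string; body: { model: string; messages: ChatTurn[] } } {
  const provider = providers[providerName];
  if (!provider) {
    throw new Error(`Unknown provider: ${providerName}`);
  }
  return {
    url: `${provider.baseUrl}/chat/completions`,
    body: { model: provider.model, messages: [...conversation] },
  };
}

const conversation: ChatTurn[] = [
  { role: "system", content: "You are a helpful assistant." },
  { role: "user", content: "Summarize WebGPU in one sentence." },
];

// Same conversation, two different backends.
const viaOpenAI = payloadFor("openai", conversation);
const viaLocal = payloadFor("lmstudio", conversation);
```

Because the message list is kept separately from the provider settings, the context carries over when the model changes mid-conversation.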

Interesting, thanks for the info!

I wasn't aware of the update process being used as an attack vector (if it's still a thing) gonna have to read up more on that.

I used Apple for the last few years until recently and I can't say I've ever really noticed stuff like apps faking being another app. That's not to say it doesn't happen of course.

I do know the Apple app approval process is definitely more strict than what is required for the Play Store.

I'm not very experienced with Apple or Android development so I'd be curious to hear from devs that use both platforms as well.

I disliked Signal app-wise, and the Matrix app was a buggy mess for me and the 4 other people who tried to use it as well.

SimpleX was easy to set up and has been stable for the most part for all of us.

Basically to answer your question, people like different things.

SimpleX isn’t perfect by any means but it seems to be developed at a somewhat decent pace with noticeable improvements being made.

As a dev it’s nice to check all the official guideline boxes, as a user I’d much rather actually have features.

I feel like ChatGPT itself probably has a fairly loose temp setting (just a hunch), and I tend to set my conversations up to be more on the strict side.

I imagine that’s why our results differ. It’s strange OpenAI doesn’t let ChatGPT site users, or at least premium users, adjust anything really yet.
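For anyone wondering what "loose" versus "strict" means here, this is a small sketch of the standard temperature-scaled softmax that sampling is generally based on. The scores are made up for illustration, and what ChatGPT actually uses internally is not something I know, so treat this as the general idea only.

```typescript
// Convert raw model scores (logits) into probabilities at a given temperature.
function softmaxWithTemperature(logits: number[], temperature: number): number[] {
  if (temperature <= 0) {
    throw new Error("temperature must be positive");
  }
  const scaled = logits.map((x) => x / temperature);
  const max = Math.max(...scaled); // subtract the max for numerical stability
  const exps = scaled.map((x) => Math.exp(x - max));
  const total = exps.reduce((a, b) => a + b, 0);
  return exps.map((e) => e / total);
}

// Made-up scores for three candidate next tokens.
const exampleLogits = [2.0, 1.0, 0.5];

// Low temperature: probability piles onto the top-scoring token.
const strict = softmaxWithTemperature(exampleLogits, 0.2);

// Higher temperature: probability spreads out, so answers vary more.
const loose = softmaxWithTemperature(exampleLogits, 1.5);
```

With the strict setting nearly all of the probability lands on the first token, while the loose setting leaves the other two with a real chance of being picked, which is one plausible reason two people can get different answers to the same prompt.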

Look at the first question in my first screenshot. It gets that question correct for “mayonnaise” lol

But it’s able to correct itself, unlike what’s shown in the OP messages.

It's extremely semantic, it seems, but it clearly listens. It's neat to see how different each person's experience is.

Also, different tuning parameters etc. could make outputs different. That might explain why mine is seemingly a bit better at listening.

Now it’s broken. I guess I don’t use it this way often enough. Interesting nonetheless!

Edit - it’s very semantic, it matters if I include an uppercase “S” or not. That’s amusing.

I wonder if the temperature settings adjustment would fix that or just make it even weirder.

Idk what I’m doing wrong, thankfully it always seems to listen and work fine for me lmao

You’ve never actually used them properly then.