This is silly.

The article is an anecdote about one incompetent user using a new tool: ChatGPT.

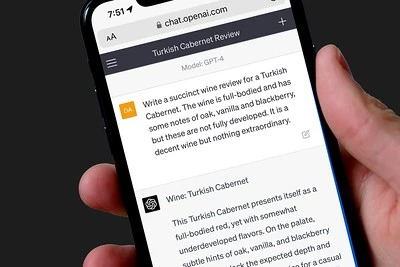

He uses the wrong tool for what he's trying to accomplish: finding sources. The free version of ChatGPT cannot search the internet and has no internal fact memory, contrary to what he seems to assume.

So he, like many others, runs into hallucinations.

Then he jumps to conclusions:

- Our jobs are safe

- Chat GPT doesn’t make mistakes or tell falsehoods – it just gets confused

- He was going to have to produce the substance of his keynote address the old-fashioned way

How much weight does this assessment or article have?

People who better understand what they can expect from an LLM, and who are willing to invest a tad more time into learning how to use a new tool well, will of course produce better results.

If you want an LLM that can find sources, use an LLM that can find sources. Use the paid ChatGPT 4.0, Bing AI or perplexity.ai.

Like all tools that are used well, they become a productivity multiplier, which naturally means fewer people are needed to do the same work. If your job involves text, and you refuse to learn how to use state-of-the-art tools, your job is probably not that safe. Yes, maybe "for the next week or so", but AI development has not stopped, so how does that help? You're not going to be replaced by AI, but by people who learned how to work with AI.

Here's a paper on the topic, which comes to vastly different conclusions than this anecdotal opinion piece: GPTs are GPTs: An Early Look at the Labor Market Impact Potential of Large Language Models

You can upload it to https://www.chatpdf.com/ to get summaries or ask questions.