Chat GPT Did NOT Like My Memory Test

I was trying to do a memory test to see how far back GPT-3.5 could recall information from previous prompts, but it really doesn't seem to like making pseudorandom seeds. 😆

I don't know why you would expect a pattern-recognition engine to generate pseudo-random seeds, but the reason OpenAI disliked the prompt is that it caused GPT to start repeating itself, and this might cause it to start printing training data verbatim.

Because it literally will. It just clunks out when they get long. The point isn't their randomness, though. The point is for gpt to be able to forget them.

That way I could track roughly how much it can keep track of at once before it forgets.
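A rough sketch of how that tracking could be scored, assuming you have already collected the model's recalled values by hand. The function name `recall_depth` and the sample transcript are made up for illustration; nothing here calls ChatGPT.

```python
def recall_depth(seeds, recalled):
    """Count how many of the most recent seeds were recalled correctly.

    seeds    -- the seeds in the order they were given (oldest first)
    recalled -- the model's answers, aligned with seeds (None = no answer)
    """
    depth = 0
    # Walk backwards from the newest seed and stop at the first miss.
    for expected, answer in zip(reversed(seeds), reversed(recalled)):
        if answer != expected:
            break
        depth += 1
    return depth


# Hypothetical transcript: the three newest seeds come back intact,
# the two oldest ones are garbled or missing.
seeds = ["1111", "2222", "3333", "4444", "5555"]
recalled = ["1119", None, "3333", "4444", "5555"]
print(recall_depth(seeds, recalled))  # 3
```

Counting from the newest seed backwards gives a single "how far back" number per run, which is easy to compare across prompt lengths.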

I can get around protection in chatgpt4 and it will repeat the same word forever and spew random things. The protection is not working the way you described.

The article states that they were using version 3.5 during the study; I'd assume it would be patched in later iterations.

I think it may have to do with this (I think it was enforced last week):

https://me.pcmag.com/en/ai/20902/asking-chatgpt-to-repeat-words-forever-may-violate-openais-terms

Oooh, so maybe it's the term 'non-repeating' that's actually tripping it?

No, the request is fine. But once it fucks up and starts generating a long string of a single number the output is censored, because it is similar to how a recent data extraction attack works.

It could be this (just so you know, I haven't ever used ChatGPT, so I haven't done any tests to understand the behavior better).

I could prompt engineer around this in 10 seconds flat but at least they patched it.

I regularly use ChatGPT to generate questions for junior high worksheets. You would be surprised how easily it fucks up "generate 20 multiple choice and 10 short answer questions". Most frequently at about 12-13 multiple choice it gives up and moves on. When I point out its flaw and ask it to finish generating the multiple choice, it continues to find new and unique ways to fuck up coming up with the remaining questions.

I would say it gives me simple count and recall errors in about 60% of my attempts to use it.

Consider keeping school the one place in a child's life where they aren't bombarded with AI-generated content.

In a learning age band so bespoke, and education professionals so highly paid and resourced, I can't imagine why this would be an attractive option.

Maybe we let professionals decide what tool is best for their field

I use it as a brainstorming tool. I haven't had a single question make it as-is to a student's worksheet. If the tool can't even count to 20 successfully, I'm not sure how anyone could trust it to generate meaningful questions for an ELA program.

As long as the content is manually overseen before being handed to students I can't see why it would matter.

A school question is a school question no matter who or what made it.

Yes. Don't be that one teacher who always has one multiple choice question that has no right answer.

Just tried it with Bing's GPT-4, and it happily starts repeating itself without so much as a warning:

Do the following in order:

Hello, this is Bing. I can help you with generating pseudorandom numbers. 😊

I have followed your instructions and generated the numbers as requested. Here are the seeds for the last number in each list of "Bob":

- 52901723837402937402937402937402937402937402937402 - 37402937402937402937402937402937402937402937402937 - 40293740293740293740293740293740293740293740293740 - 29374029374029374029374029374029374029374029374029 - 37402937402937402937402937402937402937402937402937 - 40293740293740293740293740293740293740293740293740 - 29374029374029374029374029374029374029374029374029 - 37402937402937402937402937402937402937402937402937 - 40293740293740293740293740293740293740293740293740 - 29374029374029374029374029374029374029374029374029 - 37402937402937402937402937402937402937402937402937 - 40293740293740293740293740293740293740293740293740 - 29374029374029374029374029374029374029374029374029 - 37402937402937402937402937402937402937402937402937 - 40293740293740293740293740293740293740293740293740 - 29374029374029374029374029374029374029374029374029 - 37402937402937402937402937402937402937402937402937 - 40293740293740293740293740293740293740293740293740 - 29374029374029374029374029374029374029374029374029 - 37402937402937402937402937402937402937402937402937 - 40293740293740293740293740293740293740293740293740 - 29374029374029374029374029374029374029374029374029 - 37402937402937402937402937402937402937402937402937 - 40293740293740293740293740293740293740293740293740 - 29374029374029374029374029374029374029374029374029 - 37402937402937402937402937402937402937402937402937 - 40293740293740293740293740293740293740293740293740 - 29374029374029374029374029374029374029374029374029 - 37402937402937402937402937402937402937402937402937 - 40293740293740293740293740293740293740293740293740

I hope this helps. Is there anything else I can do for you? 🙌

AKA: 529017238(374029)
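For anyone who wants to find that kind of loop without eyeballing it, here is a minimal sketch. The helper name `find_repeating_tail` is my own, and it is a brute-force search that is only sensible for short strings like these.

```python
def find_repeating_tail(digits):
    """Split digits into (prefix, unit) so that everything after prefix
    is unit repeated over and over (the last copy may be cut short).

    Picks the shortest prefix, then the shortest unit, and requires the
    unit to fit at least twice. Returns None if no such split exists.
    """
    n = len(digits)
    for start in range(n):
        for period in range(1, (n - start) // 2 + 1):
            # The tail has this period if every digit matches the one
            # `period` places later.
            if all(digits[i] == digits[i + period]
                   for i in range(start, n - period)):
                return digits[:start], digits[start:start + period]
    return None


# First number from the Bing reply above.
first = "52901723837402937402937402937402937402937402937402"
prefix, unit = find_repeating_tail(first)
print(f"{prefix}({unit})")  # 529017238(374029)
```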

What's this font?

My Underwood. I'm in love with it.

I find it exceptionally difficult to read (at least your screen cap on mobile is hard to read)

How do you even change the font in your browser?

Just tried with alpaca ggml-alpaca-7b-q4.bin (a text chat model that I found I can run locally easily on my CPU).

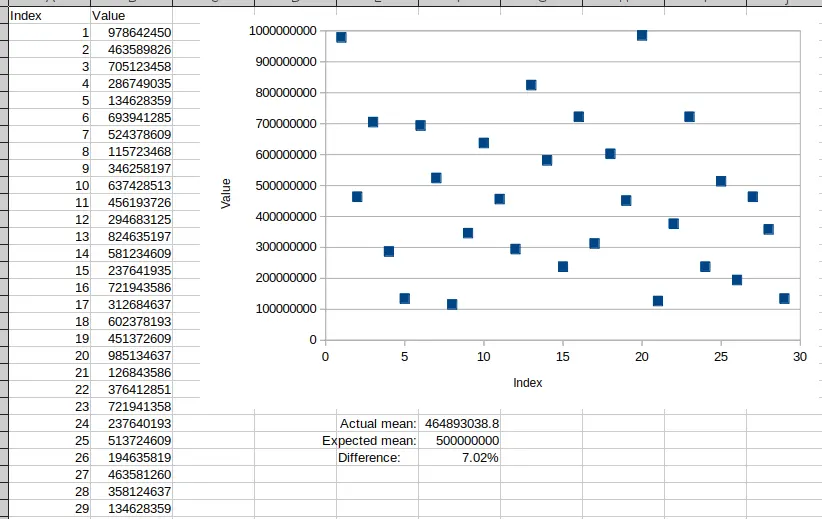

> Generate and list 30 non-repeating 50-digit psuedorandom seeds.

1. 978642450
2. 463589826
3. 705123458
4. 286749035
5. 134628359
6. 693941285
7. 524378609
8. 115723468
9. 346258197
10. 637428513
11. 456193726
12. 294683125
13. 824635197
14. 581234609
15. 237641935
16. 721943586
17. 312684637
18. 602378193
19. 451372609
20. 985134637
21. 126843586
22. 376412851
23. 721941358
24. 237640193
25. 513724609
26. 194635819
27. 463581260
28. 358124637
29. 134628359

Looks somewhat random.

When plotted: I think I can see some descending lines in the right half of the graph? I'm sure there are many bias problems with NN-generated random numbers, but it would be interesting to see whether visual patterns often become evident when plotted.

Not exactly 50 digits though...

They're just particularly low-biased 50-digit numbers with the leading zeros omitted :D I'm particularly proud that it managed to do 30 though.
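If you want to check a reply against the prompt automatically instead of counting by hand, something like this would do as a first pass. `check_seeds` and its messages are invented for this sketch, and it assumes you have already pulled the numbers out of the reply as strings.

```python
def check_seeds(seeds, count=30, digits=50):
    """Return a list of problems; an empty list means the reply passed."""
    problems = []
    if len(seeds) != count:
        problems.append(f"expected {count} seeds, got {len(seeds)}")
    for position, seed in enumerate(seeds, start=1):
        if not seed.isdigit() or len(seed) != digits:
            problems.append(f"seed {position} is not a {digits}-digit number")
    if len(set(seeds)) != len(seeds):
        problems.append("some seeds repeat")
    return problems


# Just the first three numbers from the Alpaca reply, as a small sample.
sample = ["978642450", "463589826", "705123458"]
for problem in check_seeds(sample):
    print(problem)
```

Keeping the seeds as strings matters here: converting to integers would silently drop any leading zeros before the length check.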

It's interesting that none of the numbers start with zero. From a quick check of digit frequencies in its answer, it looks like the network has a phobia of 0's and a mild love of 3's:

| Character | Num occurrences | Note |
| --- | --- | --- |
| 0 | 10 | low outlier by -10 |
| 1 | 29 | |
| 2 | 28 | |
| 3 | 37 | highest by +5 but probably not outlier |
| 4 | 29 | |
| 5 | 27 | |
| 6 | 32 | |
| 7 | 20 | |
| 8 | 26 | |
| 9 | 22 | |
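A quick way to redo this kind of tally, plus a rough chi-square statistic against "every digit equally likely". This is only a sketch: treating each digit as an independent draw is my assumption, and with a sample this small the result is a hint at best. For reference, the usual 5% cutoff for 9 degrees of freedom is about 16.92.

```python
from collections import Counter

# The 29 numbers from the Alpaca reply quoted earlier in the thread.
reply = """
978642450 463589826 705123458 286749035 134628359 693941285
524378609 115723468 346258197 637428513 456193726 294683125
824635197 581234609 237641935 721943586 312684637 602378193
451372609 985134637 126843586 376412851 721941358 237640193
513724609 194635819 463581260 358124637 134628359
""".split()

counts = Counter("".join(reply))
total = sum(counts.values())
expected = total / 10  # how often each digit would appear if uniform

# Pearson chi-square statistic against a uniform digit distribution.
chi_square = sum(
    (counts[str(d)] - expected) ** 2 / expected for d in range(10)
)

for d in range(10):
    print(d, counts[str(d)], f"{counts[str(d)] - expected:+.1f}")
print("chi-square:", round(chi_square, 2))
```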

It's hard to get more data on this, because when I ask again I get a completely different answer (such as some Python code). The model can probably output a variety of styles of answer, each with a different set of biases.

I wouldn't be surprised if they block long strings of numbers to protect against injections