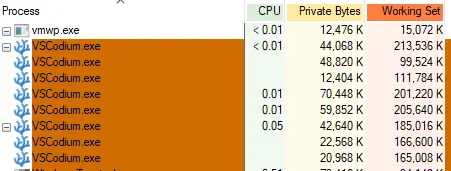

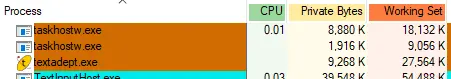

Sloppiness is rarely contained in one narrow area. If programmers are so sloppy they're using up 1.5-2GB to do literally nothing, then they're sloppy in how they do pretty much everything.

My very first computer had all my friends exceedingly jealous. I had 208KB of RAM, see. (No, that's not a typo. KB.) I could run four simultaneous users, each user having 48KB of RAM, with a staggering 16KB available for the operating system. I had spreadsheet and word processing applications available, as well as, naturally, development applications. (I also had a secret weapon that drove my friends crazy: while they were swapping around their floppy disks holding 160KB of data each, I had two of those … and I had a 5MB hard disk!)

I compare those days to now and I have to laugh. My current computer (which is considered pretty weak by modern standards since I don't game so I don't give a fuck about GPUs and ten billion cores and clock rates in the Petahertz range) is almost four orders of magnitude faster than that first computer at the CPU level. I have six orders of magnitude more disk space and the disks are at least 3 orders of magnitude faster (possibly more: I don't have the stats for the old drive on hand.) I have five orders of magnitude more memory and it, again, is probably 3 orders of magnitude or more faster.

Yet …

I don't feel anywhere from 3 to 6 orders of magnitude more productive. Indeed, I'd be surprised if I got much more than an order of magnitude better in terms of productivity with this (barely) modern machine than I had with my first machine. Things look prettier (by far!), I'll admit that, but in terms of actually getting anything done, these thousands to millions of times more resources are basically 100% wasted. And they're wasted precisely because every time we increase our ability to do something in a computer by a factor of 10, sloppy- and lazy-assed programmers increase the resources they use to get things done by a factor of 11.

And it shows.

That old computer? Slow as it was, it booted from nothing to full, multi-user functionality in 10 seconds. 10 seconds after turning the power on, I could have up to four users running their software (or in my case one user with four screens) without a care in the world. Loading the word processor or spreadsheet was practically instantaneous. One, maybe two seconds. It didn't register as a wait. I just timed loading LibreOffice Writer on this modern system that runs four orders of magnitude faster. Six seconds, give or take. Which isn't even a slow-loading program! (Web browsers take significantly longer….)

And you know what problem I never once faced on that old computer running at under a ten-thousandth (!) of the speed? Typing faster than the system could keep up while displaying text. Yet as I type this I'm consistently typing one or two characters faster than the web browser can update plain text in a box. On a machine more than ten thousand times faster!

So yes, yes it matters. When a system likely made before you were even born is running circles around a modern system in key pieces of functionality (albeit with the modern one looking far, far, far prettier while doing it!), there's something going horribly wrong in software. And shit like VS Code is almost the Platonic Form of what's wrong with software today: bloated, slow, and so overpacked with features it has no elegance in functionality or implementation.

But hey, if you want to waste 2GB of RAM to load a 12KB text file, more power to you! I'll stick with a system that only wastes 30MB of RAM to do the same, and runs faster, and looks cleaner, and is easier to extend. (And since its total code, including the C code and the Lua extensions, is something you can peruse and understand out of the box in a weekend, it's also less buggy than VS Code: that's the other cost of bloat, after all. Bugs.)