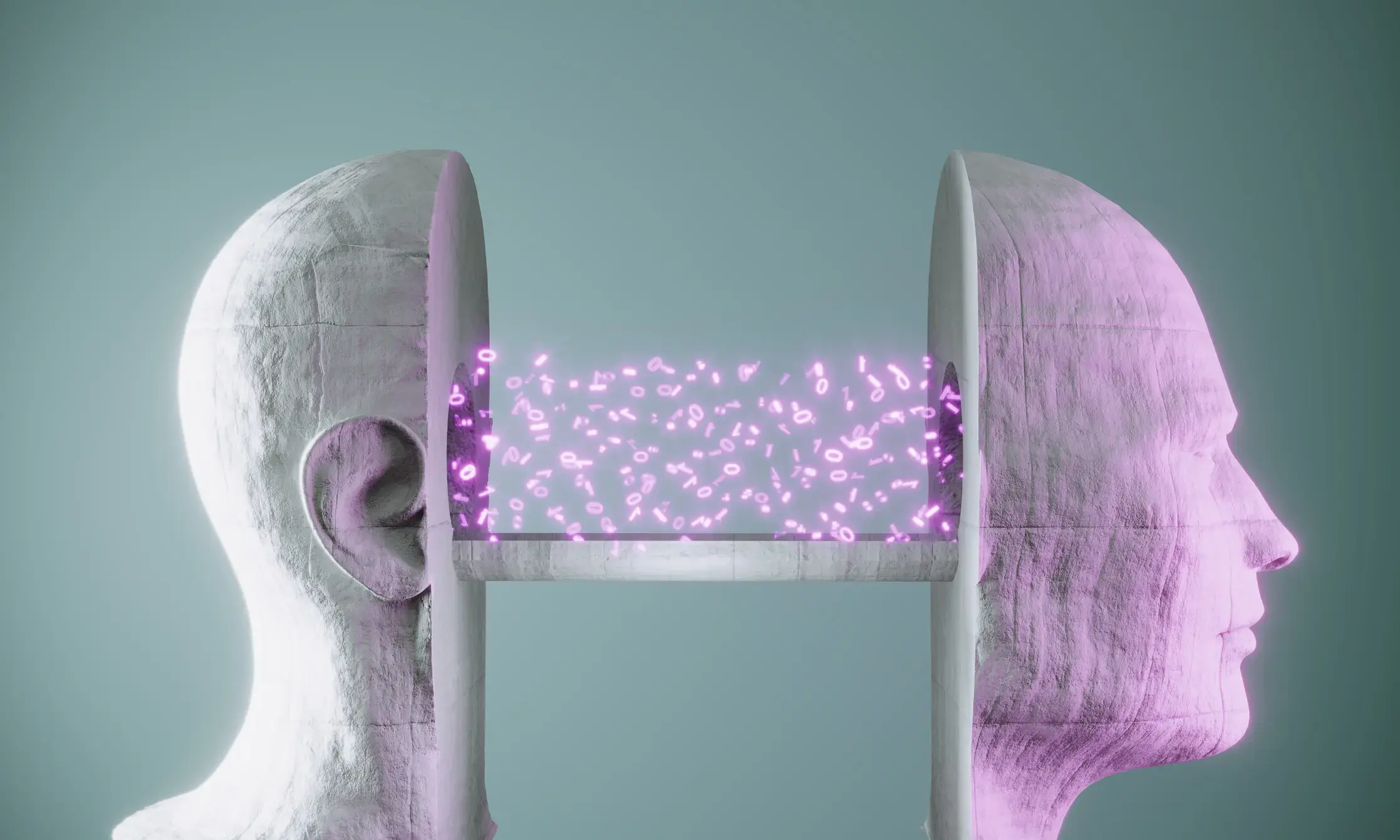

Apple study exposes deep cracks in LLMs’ “reasoning” capabilities

Irrelevant red herrings lead to “catastrophic” failure of logical inference.

One time I exposed deep cracks in my calculator's ability to write words with upside down numbers. I only ever managed to write BOOBS and hELLhOLE.

LLMs aren't reasoning. They can do some stuff okay, but they aren't thinking. Maybe if you had hundreds of them with unique training data all voting on proposals you could get something along the lines of a kind of recognition, but at that point you might as well just simulate cortical columns and try to do Jeff Hawkins' idea.

LLMs aren't reasoning. They can do some stuff okay, but they aren't thinking

And the more people realize it, the better, which is why it's good that research like this from a reputable company makes headlines.

What about boobless?

Maggie's boobs weighed [69] pounds which was [2], [2], [2] much, so she went down [51]st street to see Dr. [X]; after an [8] hour operation she was [flip calculator]

Is it sad that I still remember this calculator joke verbatim from middle school?

Wow, I'm impressed