Breast Cancer

Why do I still have to work my boring job while AI gets to create art and look at boobs?

Because life is suffering and machines dream of electric sheep.

I’ve seen things you people wouldn’t believe.

I dream of boobs.

Now make mammograms not $500 and not have a 6 month waiting time and make them available for women under 40. Then this'll be a useful breakthrough

It's already this way in most of the world.

Oh for sure. I only meant in the US where MIT is located. But it's already a useful breakthrough for everyone in civilized countries

Better yet, give us something better to do about the cancer than slash, burn, poison. Something that's less traumatic on the rest of the person, especially in light of the possibility of false positives.

Also, flying cars and the quadrature of the circle.

I think it’s free in most of Europe, or relatively cheap

Done.

Unfortunately AI models like this one often never make it to the clinic. The model could be impressive enough to identify 100% of cases that will develop breast cancer. However, if it has a false positive rate of, say, 5%, its use may actually create more harm than it intends to prevent.

Another big thing to note: we recently had a different but VERY similar headline about an AI that found typhoid early and could point it out more accurately than doctors could.

But when they examined the AI to see what it was doing, it turned out that it was weighing the specs of the machine being used to do the scan... An older machine means the area was likely poorer and therefore more likely to have typhoid. The AI wasn't pointing out whether someone had typhoid; it was just telling you whether they were in a rich area or not.

That's actually really smart. But that info wasn't given to doctors examining the scan, so it's not a fair comparison. It's a valid diagnostic technique to focus on the particular problems in the local area.

"When you hear hoofbeats, think horses not zebras" (outside of Africa)

That is quite a statement that it still had a better detection rate than doctors.

What is more important, save life or not offend people?

That's why these systems should never be used as the sole decision makers, but instead work as a tool to help the professionals make better decisions.

Keep the human in the loop!

Not at all, in this case.

A false positive of even 50% can mean telling the patient "they are at a higher risk of developing breast cancer and should get screened every 6 months instead of every year for the next 5 years".

Keep in mind that women have about a 12% chance of getting breast cancer at some point in their lives. During the highest risk years it's a 2 percent chance per year, so a machine with a 50% false positive for a 5 year prediction would still only be telling like 15% of women to be screened more often.
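
A rough check of that arithmetic, as a sketch only. It assumes each year's 2% risk is independent and that the model flags every woman who will develop cancer; both are simplifications, and the variable names are made up for illustration. "50% false positive" can be read two ways, so both are computed.

```python
annual_risk = 0.02   # from the comment: ~2% per year in the highest-risk years
years = 5

# Chance of developing cancer at some point in the 5-year window,
# assuming independent years (a simplification). About 0.096.
five_year_risk = 1 - (1 - annual_risk) ** years

# Reading A (assumed to be what the comment means): half of the women the
# model flags never develop cancer. About 0.19 of all women get flagged.
flagged_if_half_of_flags_are_wrong = five_year_risk / 0.5

# Reading B (textbook false positive rate): half of the women who stay
# healthy get flagged anyway. About 0.55 of all women get flagged.
flagged_if_textbook_fpr = five_year_risk + 0.5 * (1 - five_year_risk)

print(round(five_year_risk, 3))
print(round(flagged_if_half_of_flags_are_wrong, 3))
print(round(flagged_if_textbook_fpr, 3))
```

Under reading A the result lands in the same ballpark as the "like 15%" figure; under reading B it would be much higher, so which definition is meant matters a lot.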

Breast imaging already relies on a high false positive rate. False positives are way better than false negatives in this case.

That’s just not generally true. Mammograms are usually only recommended to women over 40. That’s because the rates of breast cancer in women under 40 are low enough that testing them would cause more harm than good thanks in part to the problem of false positives.

How would a false positive create more harm? Isn't it better to cast a wide net and detect more possible cases? Then false negatives are the ones that worry me the most.

It’s a common problem in diagnostics and it’s why mammograms aren’t recommended to women under 40.

Let’s say you have 10,000 patients. 10 have cancer or a precancerous lesion. Your test may be able to identify all 10 of those patients. However, if it has a false positive rate of 5% that’s around 500 patients who will now get biopsies and potentially surgery that they don’t actually need. Those follow up procedures carry their own risks and harms for those 500 patients. In total, that harm may outweigh the benefit of an earlier diagnosis in those 10 patients who have cancer.
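
The numbers in that example can be checked with a few lines. This is a minimal sketch, not a clinical model; the function name and its parameters are hypothetical, and the 100% sensitivity is taken from the comment's "able to identify all 10".

```python
def screening_outcomes(population, cases, sensitivity, false_positive_rate):
    """Return (true positives, false positives, share of positives that are real)."""
    healthy = population - cases
    true_positives = sensitivity * cases
    false_positives = false_positive_rate * healthy
    # Positive predictive value: of everyone who tests positive,
    # what fraction actually has the disease?
    ppv = true_positives / (true_positives + false_positives)
    return true_positives, false_positives, ppv


# 10,000 patients, 10 with cancer, every case caught, 5% false positive rate
tp, fp, ppv = screening_outcomes(10_000, 10, 1.0, 0.05)
print(tp, fp, round(ppv, 4))  # 10.0 499.5 0.0196
```

So roughly 500 healthy patients get flagged for every 10 real cases, and only about 2% of positive results are true positives.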

Well it'd certainly benefit the medical industry. They'd be saddling tons of patients with surgeries, chemotherapy, mastectomy, and other treatments, "because doctor-GPT said so."

But imagine being a patient getting physically and emotionally altered, plunged into irrecoverable debt, distressing your family, and it all being a whoopsy by some black-box software.

The most beneficial application of AI like this is to reverse-engineer the neural network to figure out how the AI works. In this way we may discover a new technique or procedure, or we might find out the AI's methods are bullshit. Under no circumstance should we accept a "black box" explanation.

good luck reverse-engineering millions if not billions of seemingly random floating point numbers. It's like visualizing a graph in your mind by reading an array of numbers, except in this case the graph has as many dimensions as the neural network has inputs, which is the number of pixels the input image has.

Under no circumstance should we accept a "black box" explanation.

Go learn at least the basic principles of neural networks, because this sentence of yours alone makes me want to slap you.

Don't worry, researchers will just get an AI to interpret all those floating point numbers and come up with a human-readable explanation! What could go wrong? /s

Hey look, this took me like 5 minutes to find.

Censius guide to AI interpretability tools

Here's a good thing to wonder: if you don't know how your black box model works, how do you know it isn't racist?

Here's what looks like a university paper on interpretability tools:

As a practical example, new regulations by the European Union proposed that individuals affected by algorithmic decisions have a right to an explanation. To allow this, algorithmic decisions must be explainable, contestable, and modifiable in the case that they are incorrect.

Oh yeah. I forgot about that. I hope your model is understandable enough that it doesn't get you in trouble with the EU.

Oh look, here you can actually see one particular interpretability tool being used to interpret one particular model. Funny that, people actually caring what their models are using to make decisions.

Look, maybe you were having a bad day, or maybe slapping people is literally your favorite thing to do, who am I to take away mankind's finer pleasures, but this attitude of yours is profoundly stupid. It's weak. You don't want to know? It doesn't make you curious? Why are you comfortable not knowing things? That's not how science is propelled forward.

iirc it recently turned out that the whole black box thing was actually a bullshit excuse to evade liability, at least for certain kinds of model.

Well, in theory you can explain how the model comes to its conclusion. However, I'd guess that only 0.1% of the "AI Engineers" are actually capable of that. And those probably cost 100k per month.

Link?

It depends on the algorithms used. Now the lazy approach is to just throw neural networks at everything and waste immense computation resources. Of course you then get results that are difficult to interpret. There are much more efficient algorithms that work well on many problems and give you interpretable decisions.

IMO, the "black box" thing is basically ML developers hand-waving and saying "it's magic" because they know it will take way too long to explain all the underlying concepts in order to even start to explain how it works.

I have a very crude understanding of the technology. I'm not a developer, I work in IT support. I have several friends that I've spoken to about it, some of whom have made fairly rudimentary machine learning algorithms and neural nets. They understand it, and they've explained a few of the concepts to me, and I'd be lying if I said that none of it went over my head. I've done programming and development, I'm senior in my role, and I have a lifetime of technology experience and education... And it goes over my head. What hope does anyone else have? If you're not a developer or someone ML-focused, yeah, it's basically magic.

I won't try to explain. I couldn't possibly recall enough about what has been said to me, to correctly explain anything at this point.

The AI developers understand how AI works, but that does not mean that they understand the thing that the AI is trained to detect.

For instance, the cutting edge in protein folding (at least as of a few years ago) is Google's AlphaFold. I'm sure the AI researchers behind AlphaFold understand AI and how it works. And I am sure that they have an above average understanding of molecular biology. However, they do not understand protein folding better than the physicists and chemists who have spent their lives studying the field. The core of their understanding is "the answer is somewhere in this dataset. All we need to do is figure out how to throw ungodly amounts of compute at it, and we can make predictions". Working out how to productively throw that much compute power at a problem is not easy either, and that is what ML researchers understand and are experts in.

In the same way, the researchers here understand how to go from a large dataset of breast images to cancer predictions, but that does not mean they have any understanding of cancer. And certainly not a better understanding than the researchers who have spent their lives studying it.

An open problem in ML research is how to take the billions of parameters that define an ML model and extract useful information that can provide insights to help human experts understand the system (both in general, and in understanding the reasoning for a specific classification). Progress has been made here as well, but it is still a long way from being solved.

y = w^T x

hope this helps!
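
For what it's worth, that formula is a single linear layer, and a model that small really is readable: each weight times its input is that input's contribution to the score. A toy sketch with made-up numbers follows (the weights and features are purely illustrative, not from any real model). Deep networks stack many such layers with nonlinearities in between, which is where the per-weight reading stops working.

```python
def linear_score(weights, features):
    """Compute y = w^T x for plain Python lists."""
    return sum(w * x for w, x in zip(weights, features))


weights = [0.5, -1.0, 2.0]    # hypothetical learned weights
features = [2.0, 1.0, 0.5]    # hypothetical input features

score = linear_score(weights, features)
# Per-feature contributions: in a purely linear model these sum to the score.
contributions = [w * x for w, x in zip(weights, features)]

print(score)           # 1.0
print(contributions)   # [1.0, -1.0, 1.0]
```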

our brain is a black box, we accept that. (and control the outcomes with procedures, checklists, etc)

It feels like lots of professionals can't exactly explain every single aspect of how they do what they do; sometimes it just feels right.

What a vague and unprovable thing you've stated there.

If it has just as low of a false negative rate as human-read mammograms, I see no issue. Feed it through the AI first before having a human check the positive results only. Save doctors' time when the scan is so clean that even the AI doesn't see anything fishy.

Alternatively, if it has a lower false positive rate, have doctors check the negative results only. If the AI sees something then it's DEFINITELY worth a biopsy. Then have a human doctor check the negative readings just to make sure they don't let anything that's worth looking into go unnoticed.

Either way, as long as it isn't worse than humans in both kinds of failures, it's useful at saving medical resources.

an image recognition model like this is usually tuned specifically to have a very low false negative (well below human, often) in exchange for a high false positive rate (overly cautious about cancer)!
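
That trade-off usually comes down to where the decision threshold sits on the model's output score. A small sketch with invented scores and labels (none of this is from the paper) shows how lowering the threshold removes false negatives at the cost of extra false positives.

```python
def confusion_counts(scores, labels, threshold):
    """Count (tp, fp, fn, tn) when scores >= threshold are called positive."""
    tp = fp = fn = tn = 0
    for score, label in zip(scores, labels):
        predicted_positive = score >= threshold
        if predicted_positive and label == 1:
            tp += 1
        elif predicted_positive and label == 0:
            fp += 1
        elif not predicted_positive and label == 1:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


# Hypothetical model scores and ground truth (1 = cancer, 0 = healthy)
scores = [0.05, 0.10, 0.20, 0.35, 0.40, 0.55, 0.60, 0.80, 0.90, 0.95]
labels = [0, 0, 0, 1, 0, 0, 1, 1, 0, 1]

print(confusion_counts(scores, labels, 0.5))  # (3, 2, 1, 4): one missed case
print(confusion_counts(scores, labels, 0.3))  # (4, 3, 0, 3): no misses, one more false alarm
```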

This is exactly what is being done. My eldest child is in a Ph.D. program for human-robot interaction and medical intervention, and has worked on image analysis systems in this field. Their intended use is exactly that: a "first look" and a "second look". A first look to help catch the small, easily overlooked pre-tumors, and tentatively mark clear ones. A second look to be a safety net for tired, overworked, or outdated eyes.

Nice comment. I like the detail.

For me, the main takeaway doesn't have anything to do with the details though, it's about the true usefulness of AI. The details of the implementation aren't important, the general use case is the main point.

You in QA?

HAHAHAHA thank fuck I am not

Ok, I'll concede. Finally a good use for AI. Fuck cancer.

It's got a decent chunk of good uses. It's just that none of those are going to make anyone a huge ton of money, so they don't have a hype cycle attached. I can't wait until the grifters get out and the hype cycle falls away, so we can actually get back to using it for what it's good at and not shoving it indiscriminately into everything.

The hypesters and grifters do not prevent AI from being used for truly valuable things even now. In fact medical uses will be one of those things that WILL keep AI from just fading away.

Just look at those marketing wankers as a cherry on the top that you didn't want or need.

Also, for GPU prices to come down. Right now the AI garbage is eating a lot of the GPU production, as well as wasting a ton of energy. It sucks. Right as the crypto stuff started dying out we got AI crap.

Those are going to make a ton of money for a lot of people. Every 1% fuel efficiency gained, every second saved in an industrial process, it's hundreds of millions of dollars.

You don't need AI in your fridge or in your snickers, that will (hopefully) die off, but AI is not going away where it matters.

A cure for cancer, if it can be literally nipped in the bud, seems like a possible money-maker to me.

Honestly they should go back to calling useful applications ML (that is what it is) since AI is getting such a bad rap.

I once had ideas about building a machine learning program to assist workflows in Emergency Departments, and its training data would be entirely generated by the specific ER it's deployed in. Because of differences in populations, the data is not always readily transferable between departments.

machine learning is a type of AI. scifi movies just misused the term and now the startups are riding the hype trains. AGI =/= AI. there's lots of stuff to complain about with ai these days like stable diffusion image generation and LLMs, but the fact that they are AI is simply true.

And if we weren't a big, broken mess of late stage capitalist hellscape, you or someone you know could have actually benefited from this.

Yea none of us are going to see the benefits. Tired of seeing articles of scientific advancement that I know will never trickle down to us peasants.

Our clinics are already using ai to clean up MRI images for easier and higher quality reads. We use ai on our cath lab table to provide a less noisy image at a much lower rad dose.

... they said, typing on a tiny silicon rectangle with access to the whole of humanity's knowledge and that fits in their pocket...

I'm involved in multiple projects where stuff like this will be used in very accessible manners, hopefully in 2-3 years, so don't get too pessimistic.

I can do that too, but my rate of success is very low

This is similar to what I did for my masters, except it was lung cancer.

Stuff like this is actually relatively easy to do, but the regulations you need to conform to and the testing you have to do first are extremely stringent. We had something that worked for like 95% of cases within a couple months, but it wasn't until almost 2 years later they got to do their first actual trial.

pretty sure iterate is the wrong word choice there

They probably meant reiterate

I think it's a joke, like to imply they want to not just reiterate, but rerererereiterate this information, both because it's good news and also in light of all the sucky ways AI is being used instead. Like at first they typed, "I just want to reiterate..." but decided that wasn't nearly enough.

Common case of programmer brain

That's not the only issue with the English-esque writing.

100% true, just the first thing that stuck out at me

I suppose they just dropped the "re" off of "reiterate" since they're saying it for the first time.

Dude needs to use AI to fix his fucking grammar.

This is a great use of tech. With that said I find that the lines are blurred between "AI" and Machine Learning.

Real Question: Other than the specific tuning of the recognition model, how is this really different from something like Facebook automatically tagging images of you and your friends? Instead of saying "Here's a picture of Billy (maybe) " it's saying, "Here's a picture of some precancerous masses (maybe)".

That tech has been around for a while (at least 15 years). I remember Picasa doing something similar as a desktop program on Windows.

I've been looking at the paper, some things about it:

Good stuff

It's because AI is the new buzzword that has replaced "machine learning" and "large language models", it sounds a lot more sexy and futuristic.

Besides LLMs, large language models, we also have GANs, Generative Adversarial Networks.

https://en.wikipedia.org/wiki/Large_language_model

https://en.wikipedia.org/wiki/Generative_adversarial_network

Kinda mean of you calling Billy precancerous masses like that smh

I don't care about mean but I would call it inaccurate. Billy is already cancerous, He's mostly cancer. He's a very dense, sour boy.

Everything machine learning will be called "ai" from now until forever.

It's like how all rc helicopters and planes are now "drones"

People en masse just can't handle the nuance of language. They need a dumb word for everything that is remotely similar.

Where is the meme?

Well, in Turkish, meme means boob/breast.

The ai we got is the meme

Serious question: is there a way to get access to medical imagery as a non-student? I would love to do some machine learning with it myself, as I see lots of potential in image analysis in general. 5 years ago I created a model that was able to spot certain types of ships based only on satellite imagery, which were not easily detectable by eye and ignoring the fact that one human cannot scan 15k images in one hour. Similar use case with medical imagery - seeing the things that are not yet detectable by human eyes.

Yeah, there are some openly available datasets on competition sites like Kaggle, and some medical data is available through public institutions like the NIH.

I knew about kaggle, but not about NIH. Thanks for the hint!

Yeah there is. A bloke I know did exactly that with brain scans for his masters.

Would you mind asking your friend, so you can provide the source?

5 years ago I created a model that was able to spot certain types of ships based only on satellite imagery, which were not easily detectable by eye and ignoring the fact that one human cannot scan 15k images in one hour.

what is your intended use case? are you trying to help government agencies perfect spying? sounds very cringe ngl

My intended use case is to find ways ML can support people with certain tasks. Science is not political, and I cannot control what my technology is abused for. This is no reason to stop science entirely; there will always be someone abusing something for their own gain.

But thanks for assuming without asking first what the context was.

Yes, this is "how it was supposed to be used for".

The sentence construction quality these days is in freefall.

shrugs you know people have been confidently making these kinds of statements... since written language was invented? I bet the first person who developed written language did it to complain about how this generation of kids don't know how to write a proper sentence.

What is in freefall is the economy for the middle and working class and basic idea that artists and writers should be compensated, period. What has released us into freefall is that making art and crafting words are shit on by society as not a respectable job worth being paid a living wage for.

There are a terrifying amount of good writers out there, more than there have ever been, both in total number AND per capita.

This isn't a creative writing project. This isn't an artist presenting their work. Where in the world did that tangent even come from?

This is just plain speech, written objectively incorrectly.

But go on, I'm sure next I'll be accused of all the problems of the writing industry or something.

Not everyone's a native speaker.

Bro, it's Twitter

And that excuses it I guess.

Btw, my dentist used AI to identify potential problems in a radiograph. The result was pretty impressive. Have to get a filling tho.

Much easier to assume the training data isn't garbage when the AI expert system only has a narrow scope, right?

Sure. And the expert still interprets. But the result was exact.

Yeah, machine learning actually has a ton of very useful applications in things. It’s just predictably the dumbest and most toxic manifestations of it are hyped up in a capitalist system.

AI should be used for this, yes, however advertisement is more profitable.

It's worse than that.

This is a different type of AI that doesn't have as many consumer facing qualities.

The ones that are being pushed now are the first types of AI to have an actually discernable consumer facing attribute or behavior, and so they're being pushed because no one wants to miss the boat.

They're not more profitable or better or actually doing anything anyone wants for the most part, they're just being used where they can fit it in.

This type of segmentation is of declining practical value. Modern AI implementations are usually hybrids of several categories of constructed intelligence.

Neural networks are great for pattern recognition, unfortunately all the hype is in pattern generation and we end up with mammograms in anime style

Doctor: There seems to be something wrong with the image.

Technician: What's the problem?

Doctor: The patient only has two breasts, but the image that came back from the AI machine shows them having six breasts and much MUCH larger breasts than the patient actually has.

Technician: sighs

Why does the paperwork suddenly claim the patient is a 600-year-old shape-shifting dragon?

No link or anything, very believable.

You could participate or complain.

https://news.mit.edu/2019/using-ai-predict-breast-cancer-and-personalize-care-0507

Complain to who? Some random twitter account? Why would I do that?

Honestly this is a pretty good use case for LLMs and I've seen them used very successfully to detect infection in samples for various neglected tropical diseases. This literally is what AI should be used for.

Sure, agreed. Too bad 99% of its use is still stealing from society to make a few billionaires richer.

LLMs do language, not images.

These models aren't LLM based.

I had a housemate a couple of years ago who had a side job where she'd look through a load of these and confirm which were accurate. She didn't say it was AI though.

For a little while ours was used for this. Covid too. Client was under an alias and wasn't with us long so no idea.

Can't pigeons do the same thing?

Not my proudest fap...

That's a challenging wank

Man I miss him.

Honestly, with all respect, that is a really shitty joke. It’s god damn breast cancer, opposite of hot

I usually just skip them mouldy jokes but like cmon that is beyond the scale of cringe

Terrible things happen to people you love, you have two choices in this life. You can laugh about it or you can cry about it. You can do one and then the other if you choose. I prefer to laugh about most things and hope others will do the same. Cheers.

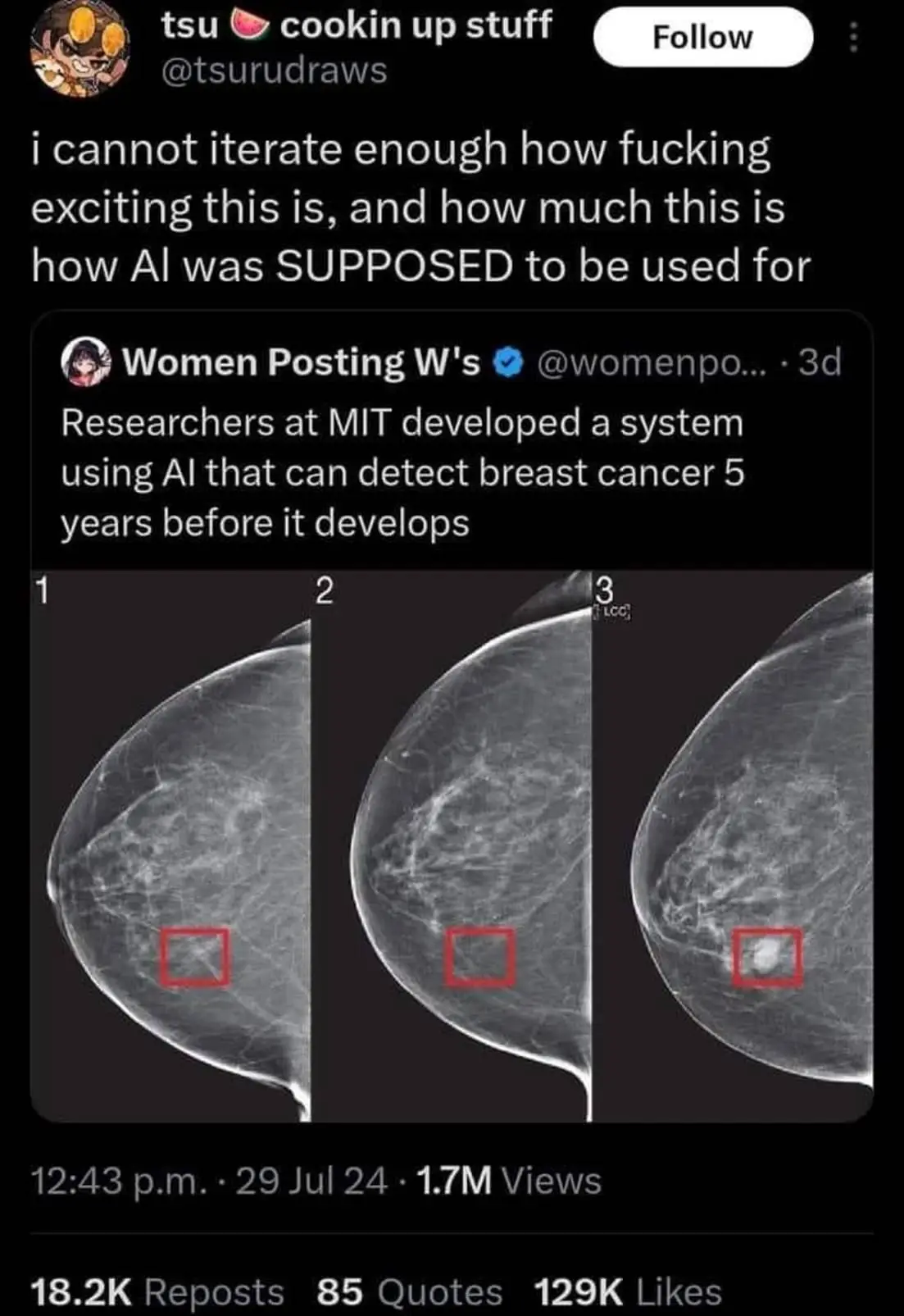

Ductal carcinoma in situ (DCIS) is a type of preinvasive tumor that sometimes progresses to a highly deadly form of breast cancer. It accounts for about 25 percent of all breast cancer diagnoses.

Because it is difficult for clinicians to determine the type and stage of DCIS, patients with DCIS are often overtreated. To address this, an interdisciplinary team of researchers from MIT and ETH Zurich developed an AI model that can identify the different stages of DCIS from a cheap and easy-to-obtain breast tissue image. Their model shows that both the state and arrangement of cells in a tissue sample are important for determining the stage of DCIS.

https://news.mit.edu/2024/ai-model-identifies-certain-breast-tumor-stages-0722

How soon could this diagnostic tool be rolled out? It sounds very promising given the seriousness of the DCIS!

Anything medical is slow, but tools like this tend to get used by doctors, not patients, so it's much easier.

As soon as your hospital system is willing to pay big money for it.

https://youtube.com/shorts/xIMlJUwB1m8?si=zH6eF5xZ5Xoz_zsz

Detecting is not enough to be useful.

The test is 90% accurate, that's still pretty useful. Especially if you are simply putting people into a high risk group that needs to be more closely monitored.

“90% accurate” is a non-statement. It’s like you haven’t even watched the video you respond to. Also, where the hell did you pull that number from?

How specific is it and how sensitive is it is what matters. And if Mirai in https://www.science.org/doi/10.1126/scitranslmed.aba4373 is the same model that the tweet mentions, then neither its specificity nor sensitivity reach 90%. And considering that the image in the tweet is trackable to a publication in the same year (https://news.mit.edu/2021/robust-artificial-intelligence-tools-predict-future-cancer-0128), I’m fairly sure that it’s the same Mirai.
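
To illustrate why a bare accuracy figure says so little, here is a sketch with invented counts (not Mirai's results, and the `metrics` helper is hypothetical): two very different classifiers that both come out "90% accurate" on 1,000 patients of whom 100 develop cancer.

```python
def metrics(tp, fp, fn, tn):
    """Accuracy, sensitivity and specificity from confusion-matrix counts."""
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total,
        "sensitivity": tp / (tp + fn),   # share of real cases that are caught
        "specificity": tn / (tn + fp),   # share of healthy patients correctly cleared
    }


# Classifier A: says "no cancer" for everyone and misses all 100 cases.
always_negative = metrics(tp=0, fp=0, fn=100, tn=900)

# Classifier B: catches 90 of 100 cases and clears 810 of 900 healthy patients.
balanced = metrics(tp=90, fp=90, fn=10, tn=810)

print(always_negative)  # accuracy 0.9, sensitivity 0.0, specificity 1.0
print(balanced)         # accuracy 0.9, sensitivity 0.9, specificity 0.9
```

Same accuracy, but only one of the two would ever find a tumor, which is why sensitivity and specificity are the numbers to ask for.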

The AI genie is out of the bottle and — as much as we complain — it isn't going away; we need thoughtful legislation. AI is going to take my job? Fine, I guess? That sounds good, really. Can I have a guaranteed income to live on, because I still need to live? Can we tax the rich?

Nooooooo you're supposed to use AI for good things and not to use it to generate meme images.

I think you mean mammary images?

I really wouldn't call this AI. It is more or less an image identification system that relies on machine learning.

That was pretty much the definition of AI before LLMs came along.

And much before that it was rule-based machine learning, which was basically databases and fancy inference algorithms. So I guess "AI" has always meant "the most advanced computer science thing which looks kind of intelligent". It's only now that it looks intelligent enough to fool laypeople into thinking there actually is intelligence there.

Good news, but it's not "AI". Please stop calling it that.

it's ai, it all is. the code that controls where the creepers in Minecraft go? AI. the tiny little neural network that can detect numbers? also AI! is it AGI? no. it's still AI. it's not that modern tech is stealing the term ai, scifi movies are the ones that started misusing it and cash grab startups are riding the hypetrain.

Wanna bet it’s not “AI” ?

It's probably more "AI" than the LLMs we've been plagued with. This sounds more like an application of machine learning, which is a hell of a lot more promising.

AI and machine learning are very similar (if not identical) things, just one has been turned into a marketing hype word a whole lot more than the other.

Learning machines are ai as well, it's not really what we picture when we think ai but it is nonetheless.

This seems exactly like what I would have referred to as AI before the pandemic. Specifically Deep Learning image processing. In terms of something you can buy off the shelf this is theoretically something the Cognex Vidi Red Tool could be used for. My experience with it is in packaging, but the base concept is the same.

Training a model requires loading images into the software and having a human mark them before having a very powerful CUDA GPU process all of that. Once the model has been trained it can usually be run on a fairly modest PC in comparison.

Haha I love Gell-Mann amnesia. A few weeks ago there was news about speeding up the internet to gazillion bytes per nanosecond and it turned out to be fake.

Now this thing is all over the internet and everyone believes it.

The source paper is available online, is published in a peer reviewed journal, and has over 600 citations. I'm inclined to believe it.

You sound like a person who hasn't been peer reviewed

Well one reason is that this is basically exactly the thing current AI is perfect for - detecting patterns.

Fair weather friends to AI crack me up.