The Great Software Quality Collapse: How We Normalized Catastrophe


I’ve been working at a small company where I own a lot of the code base.

I got my boss to accept slower initial work that was more systemically designed, and now I can complete projects that would have taken weeks in a few days.

The level of consistency and quality you get by building a proper foundation and doing things right has an insane payoff. And users notice too when they’re using products that work consistently and with low resources.

This is one of the things that frustrates me about my current boss. He keeps talking about some future project that uses a new codebase we're currently writing, at which point we'll "clean it up and see what works and what doesn't." Meanwhile, he complains about my code and how it's "too Pythonic," what with my docstrings, functions for code reuse, and type hints.

So I secretly maintain a second codebase with better documentation and optimization.

How can your code be too pythonic?

Also type hints are the shit. Nothing better than hitting shift tab and getting completions and documentation.
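For anyone wondering what "too Pythonic" looks like in practice, here is a minimal sketch. The function name and its purpose are made up for illustration and are not from anyone's actual codebase. The point is only that the signature and docstring are what an editor shows you in completions and hover docs.

```python
from typing import Sequence


def mean_latency_ms(samples: Sequence[float]) -> float:
    """Return the arithmetic mean of latency samples, in milliseconds.

    Raises ValueError if `samples` is empty, so callers get a clear
    error instead of a ZeroDivisionError from deep inside the math.
    """
    if not samples:
        raise ValueError("samples must not be empty")
    return sum(samples) / len(samples)


# The type hints tell both the reader and the tooling what goes in and out.
average = mean_latency_ms([10.0, 20.0, 30.0])
print(average)
```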

Even if you’re planning to migrate to a hypothetical new code base, getting a bunch of documented modules for free is a huge time saver.

Also migrations fucking suck, you’re an idiot if you think that will solve your problems.

(I write only internal tools and I'm a team of one. We have a whole department of people working on public and customer focused stuff.)

My boss let me spend three months with absolutely no changes to functionality or UI, just to build a better, more configurable back end with a brand new config UI, partly due to necessity (a server constraint changed), otherwise I don't think it would have ever got off the ground as a project. No changes to master for three months, which was absolutely unheard of.

At times it was a bit demoralising to do so much work for so long with nothing to show for it, but I knew the new back end would bring useful extras and faster, robust changes.

The backend config ui is still in its infancy, but my boss is sooo pleased with its effect. He is used to a turnaround for simple changes of between 1 and 10 days for the last few years (the lifetime of the project), but now he's getting used to a reply saying I've pushed to live between 1 and 10 minutes.

Brand new features still take time, but now that we really understand what it needs to do after the first few years, it was enormously helpful to structure the whole thing to be much more organised around real world demands and make it considerably more automatic.

Feels good. Feels really good.

Software has a serious "one more lane will fix traffic" problem.

Don't give programmers better hardware or else they will write worse software. End of.

This is very true. You don't need a bigger database server, you need an index on that table you query all the time that's doing full table scans.
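A rough way to see the difference, sketched with Python's built-in `sqlite3` module. The `orders` table, its columns, and the index name are hypothetical, and other databases have their own `EXPLAIN` syntax and output, so treat this as an illustration rather than a recipe.

```python
import sqlite3

# Hypothetical schema for illustration: an "orders" table filtered by customer_id.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 1000, float(i)) for i in range(10_000)],
)

QUERY = "SELECT total FROM orders WHERE customer_id = ?"


def query_plan(connection: sqlite3.Connection) -> str:
    """Return SQLite's plan description for QUERY as a single string."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + QUERY, (42,)).fetchall()
    # The last column of each plan row is the human-readable detail text.
    return "; ".join(row[-1] for row in rows)


plan_before = query_plan(conn)  # without an index: a scan over the whole table
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = query_plan(conn)   # with the index: a search using idx_orders_customer

print(plan_before)
print(plan_after)
```

The exact wording of the plan text varies between SQLite versions, but the first plan should report a scan and the second should name the index.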

Or sharding on a particular column

You never worked on old code. It's never that simple in practice when you have to make changes to existing code without breaking or rewriting everything.

Sometimes the client wants a new feature that you cannot easily implement and that needs a lot of different DB lookups that cannot be done in a single query. Sometimes your controller loops over 10000 DB records, and you call a function 3 levels down that suddenly must spawn a new DB query each time it's called, but you cannot change the parent DB query.
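The pattern described above is usually called the N+1 query problem. One common mitigation, when the parent query really cannot be touched, is to prefetch the child rows in one batch and hand the nested function a lookup table instead of a DB handle. A minimal sketch with `sqlite3` follows; the `records` and `owners` tables and the `CountingDB` helper are invented for illustration, and in real legacy code threading that lookup through three call levels is of course the hard part.

```python
import sqlite3


class CountingDB:
    """Hypothetical wrapper that counts how many queries are issued."""

    def __init__(self, connection: sqlite3.Connection) -> None:
        self.connection = connection
        self.queries = 0

    def run(self, sql: str, params=()) -> list:
        self.queries += 1
        return self.connection.execute(sql, params).fetchall()


conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE owners (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE records (id INTEGER PRIMARY KEY, owner_id INTEGER);
    """
)
conn.executemany(
    "INSERT INTO owners (id, name) VALUES (?, ?)",
    [(i, f"owner-{i}") for i in range(100)],
)
conn.executemany(
    "INSERT INTO records (id, owner_id) VALUES (?, ?)",
    [(i, i % 100) for i in range(10_000)],
)

PARENT_QUERY = "SELECT id, owner_id FROM records ORDER BY id"  # left unchanged

# N+1 pattern: one extra query for every record in the loop.
naive_db = CountingDB(conn)
names_naive = [
    naive_db.run("SELECT name FROM owners WHERE id = ?", (owner_id,))[0][0]
    for _, owner_id in naive_db.run(PARENT_QUERY)
]
naive_queries = naive_db.queries

# Batched: same parent query, then one IN (...) query for all distinct owners.
# Very large id sets would need chunking to stay under the DB's parameter limit.
batched_db = CountingDB(conn)
records = batched_db.run(PARENT_QUERY)
owner_ids = sorted({owner_id for _, owner_id in records})
placeholders = ",".join("?" for _ in owner_ids)
lookup = dict(
    batched_db.run(
        f"SELECT id, name FROM owners WHERE id IN ({placeholders})", owner_ids
    )
)
names_batched = [lookup[owner_id] for _, owner_id in records]
batched_queries = batched_db.queries

print(naive_queries, batched_queries)
```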

The article is very much off point.

The main issue is the software crisis: Hardware performance follows Moore's law, developer performance is mostly constant.

If the memory of your computer is counted in bytes without a SI-prefix and your CPU has maybe a dozen or two instructions, then it's possible for a single human being to comprehend everything the computer is doing and to program it very close to optimally.

The same is not possible if your computer has subsystems upon subsystems and even the keyboard controller has more power and complexity than the whole Apollo program combined.

So to program exponentially more complex systems we would need exponentially more software developer budget. But since it's really hard to scale software developers exponentially, we've been trying to use abstraction layers to hide complexity, to share and re-use work (no need for everyone to re-invent the templating engine) and to have clear boundaries that allow for better cooperation.

That was already the case way before Electron. Compiled languages started the trend, languages like Java or C# deepened it, and using modern middleware and frameworks just increased it.

OOP complains about the chain "React → Electron → Chromium → Docker → Kubernetes → VM → managed DB → API gateways". But he doesn't even consider that even if you run "straight on bare metal" there's a whole stack of abstractions in between your code and the execution. Every major component inside a PC nowadays runs its own separate dedicated OS that neither the end user nor the developer of ordinary software ever sees.

But the main issue always reverts back to the software crisis. If we had infinite developer resources we could write optimal software. But we don't so we can't and thus we put in abstraction layers to improve ease of use for the developers, because otherwise we would never ship anything.

If you want to complain, complain to the managers who don't allocate enough resources and to the investors who don't want to dump millions into the development of simple programs. And to the customers who aren't ok with simple things but who want modern cutting edge everything in their programs.

In the end it's sadly really the case: Memory and performance get cheaper in an exponential fashion, while developers are still mere humans and their performance stays largely constant.

So which of these two values SHOULD we optimize for?

The real problem in regards to software quality is not abstraction layers but "business agile" (as in "business doesn't need to make any long term plans but can cancel or change anything at any time") and lack of QA budget.

we would need exponentially more software developer budget.

Are you crazy? Profit goes to shareholders, not to invest in the project. Get real.

The software crysis has never ended

MAXIMUM ARMOR

Shit, my GPU is about to melt!

I agree with the general idea of the article, but there are a few wild takes that kind of discredit it, in my opinion.

"Imagine the calculator app leaking 32GB of RAM, more than older computers had in total" - well yes, the memory leak went on to waste 100% of the machine's RAM. You can't leak 32GB of RAM on a 512MB machine. Correct, but hardly mind-bending.

"But VSCodium is even worse, leaking 96GB of RAM" - again, 100% of available RAM. This starts to look like a bad faith effort to throw big numbers around.

"Also this AI 'panicked', 'lied' and later 'admitted it had a catastrophic failure'" - no it fucking didn't, it's a text prediction model, it cannot panic, lie or admit something, it just tells you what you statistically most want to hear. It's not like the language model, if left alone, would have sent an email a week later to say it was really sorry for this mistake it made and felt like it had to own it.

You can’t leak 32GB of RAM on a 512MB machine.

32 GB swap file or crash. Fair point that you'd want to restart the computer anyway, even if you have 128 GB+ of RAM. But a calculator taking 2 years off your SSD's life is not the best.

Yeah, that's quite on point. Memory leaks until something throws an out of memory error and crashes.

What makes this really seem like a bad faith argument instead of a simple misunderstanding is this line:

Not used. Not allocated. Leaked.

OOP seems to understand (or at least claims to understand) the difference between allocating (and wasting) memory on purpose and a leak that just fills up all available memory.

So what does he want to say?

Yeah, I hate that agile way of dealing with things. Business wants prototypes ASAP, but if one is actually deemed useful, you have no budget to productize it, which means that if you don't want to take all the blame for a crappy app, you have to invest heavily in all of the prototypes. Prototypes that are called next-gen projects, but get cancelled nine times out of ten 🤷🏻♀️. Make it make sense.

This. Prototypes should never be taken as the basis of a product, that's why you make them. To make mistakes in a cheap, discardable format, so that you don't make these mistakes when making the actual product. I can't remember a single time, though, that this was what actually happened.

They just label the prototype an MVP and suddenly it's the basis of a new 20 year run time project.

In my current job, they keep switching around everything all the time. Got a new product, super urgent, super high-profile, highest priority, crunch time to get it out in time, and two weeks before launch it gets cancelled without further information. Because we are agile.

THANK YOU.

I migrated services from LXC to kubernetes. One of these services has been exhibiting concerning memory footprint issues. Everyone immediately went "REEEEEEEE KUBERNETES BAD EVERYTHING WAS FINE BEFORE WHAT IS ALL THIS ABSTRACTION >:(((((".

I just spent three months doing optimization work. For memory/resource leaks in that old C codebase. Kubernetes didn't have fuck-all to do with any of those (which is obvious to literally anyone who has any clue how containerization works under the hood). The codebase just had very old-fashioned manual memory management leaks as well as a weird interaction between jemalloc and RHEL's default kernel settings.

The only reason I spent all that time optimizing and we aren't just throwing more RAM at the problem? Due to incredible levels of incompetence business-side I'll spare you the details of, our 30 day growth predictions have error bars so many orders of magnitude wide that we are stuck in a stupid loop of "won't order hardware we probably won't need but if we do get a best-case user influx the lead time on new hardware is too long to get you the RAM we need". Basically the virtual price of RAM is super high because the suits keep pinky-promising that we'll get a bunch of users soon but are also constantly wrong about that.

All of the examples are commercial products. The author doesn't know or doesn't realize that this is a capitalist problem. Of course, there is bloat in some open source projects. But nothing like what is described in those examples.

And I don't think you can avoid that if you're a capitalist. You make money by adding features that maybe nobody wants. And you need to keep doing something new. Maintenance doesn't make you any money.

So this looks like AI plus capitalism.

Sometimes, I feel like writers know that it's capitalism, but they don't want to actually call the problem what it is, for fear of scaring off people who would react badly to it. I think there's probably a place for this kind of oblique rhetoric, but I agree with you that progress is unlikely if we continue pussyfooting around the problem

But the tooling gets more bloated too, even if it does the same thing. Extreme example: Android apps.

You make money by adding features that maybe nobody wants

So, um, who buys them?

A midlevel director who doesn't use the tool but thinks all the features the salesperson mentioned seem cool

Stockholders

Stockholders want the products they own stock in to have AI features so they won't be 'left behind'

Capitalism's biggest lie is that people have freedom to choose what to buy. They have to buy what the ruling class sells them. When every billionaire is obsessed with chatbots, every app has a chatbot attached, and if you don't want a chatbot, sucks to be you, you have to pay for it anyway.

Sponsors maybe? Adding features because somebody influential wants them to be there. Either for money (like shovelware) or soft power (strengthening ongoing business partnerships)

It's just about convincing investors that you're going places. Customers don't have to want your new features or buy more of your stuff because it has them. Users certainly don't have to want or use them. Just do buzzword-driven development and keep the investors convinced that you're the future.

"Open source" is not contradictory to "capitalist", just involves a fair bit of industry alliances and\or freeloading.

“Open source” was literally invented to make Free software palatable to capital.

It absolutely is to the majority of capitalists unless it still somehow directly benefits them monetarily

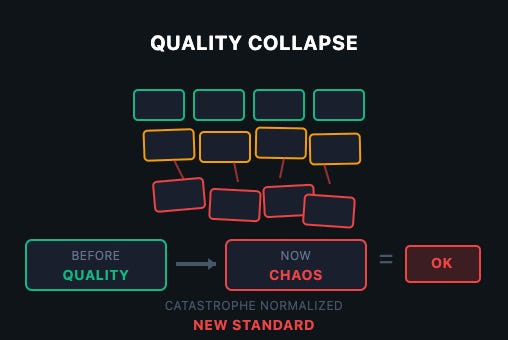

Accept that quality matters more than velocity. Ship slower, ship working. The cost of fixing production disasters dwarfs the cost of proper development.

This has been a struggle my entire career. Sometimes, the company listens. Sometimes they don't. It's a worthwhile fight but it is a systemic problem caused by management and short-term profit-seeking over healthy business growth

"Apparently there's never the money to do it right, but somehow there's always the money to do it twice."

Management never likes to have this brought to their attention, especially in a Told You So tone of voice. One thinks if this bothered pointy-haired types so much, maybe they could learn from their mistakes once in a while.

There's levels to it. True quality isn't worth it, absolute garbage costs a lot though. Some level that mostly works is the sweet spot.

Quality in this economy ? We need to fire some people to cut costs and use telemetry to make sure everyone that's left uses AI to pay AI companies because our investors demand it because they invested all their money in AI and they see no return.

Fabricated 4,000 fake user profiles to cover up the deletion

This has got to be a reinforcement learning issue, I had this happen the other day.

I asked Claude to fix some tests, so it fixed the tests by commenting out the failures. I guess that’s a way of fixing them that nobody would ever ask for.

Absolutely moronic. These tools do this regularly. It’s how they pass benchmarks.

Also you can’t ask them why they did something, they have no capacity of introspection, they can’t read their input tokens, they just make up something that sounds plausible for “what were you thinking”.

Also you can’t ask them why they did something, they have no capacity of introspection, (...) they just make up something that sounds plausible for “what were you thinking”.

It's uncanny how it keeps becoming more human-like.

No. No it doesn't, ALL human-like behavior stems from its training data ... that comes from humans.

The model we have at work tries to work around this by including some checks. I assume they get farmed out to specialised models and receive the output of the first stage as input.

Maybe it catches some stuff? It's better than pretend reasoning but it's very verbose so the stuff that I've experimented with - which should be simple and quick - ends up being more time consuming than it should be.

Another big problem not mentioned in the article is companies refusing to hire QA engineers to do actual testing before releasing.

The last two American companies I worked for had fired all the QA engineers or refused to hire any. Engineers were supposed to “own” their features and test them themselves before release. It’s obvious that this can’t provide the same level of testing and the software gets released full of bugs and only the happy path works.

Anyone else remember a few years ago when companies got rid of all their QA people because something something functional testing? Yeah.

The uncontrolled growth in abstractions is also very real and very damaging, and now that companies are addicted to the pace of feature delivery this whole slipshod situation has made normal they can’t give it up.

That was M$, not an industry thing.

It was not just MS. There were those who followed that lead and announced that it was an industry thing.

I must have missed that one

I wonder if this ties into our general disposability culture (throwing things away instead of repairing, etc)

That and also man hour costs versus hardware costs. It's often cheaper to buy some extra ram than it is to pay someone to make the code more efficient.

Sheeeit... we haven't been prioritizing efficiency, much less quality, for decades. You're so right about throwing hardware at problems. Management makes mouth-noises about quality, but when the budget hits the road, it's clear where the priorities are. If efficiency were a priority - much less quality - vibe coding wouldn't be a thing. Low-code/no-code wouldn't be a thing. People building applications on SAP or Salesforce wouldn't be a thing.

Planned Obsolescence .... designing things for a short lifespan so that things always break and people are always forced to buy the next thing.

It all originated with light bulbs 100 years ago ... inventors did design incandescent light bulbs that could last for years but then the company owners realized it wasn't economically feasible to produce a light bulb that could last ten years because too few people would buy light bulbs. So they conspired to engineer a light bulb with a limited life that would last long enough to please people but short enough to keep them buying light bulbs often enough.

Not the light bulbs. They improved light quality and reduced energy consumption per unit of light by increasing filament temperature, which reduced bulb life. Net win for the consumer.

You can still make an incandescent bulb last long by undervolting it orange, but it'll be bad at illuminating, and it'll consume almost as much electricity as when glowing yellowish white (standard).

Edison was DEFINITELY not unique or new in how he was a shithead looking for money more than inventing useful things... Like, at all.

Yes, if you factor in the source of disposable culture: capitalism.

"Move fast and break things" is the software equivalent of focusing solely on quarterly profits.

I'm glad that they added CrowdStrike to that article, because it adds a whole extra level of incompetence in the software field. The CS incident as a whole should never have happened in the first place if Microsoft had properly enforced the stance they claim to have regarding driver security and the kernel.

The entire reason CS was able to create that systemic failure was because they were (still are?) abusing the system MS has in place for signing kernel-level drivers. The process dodges MS review by using a standalone certified driver that then live-patches, instead of requiring every update to be reviewed and certified. This type of system allowed for a live update that directly modified the kernel via the already certified driver. Remote injection of uncertified code into a secure location should never have been allowed in the first place. It was a failure on every level for both MS and CS.

i think about this every time i open outlook on my phone and have to wait a full minute for it to load and hopefully not crash, versus how it worked more or less instantly on my phone ten years ago. gajillions of dollars spent on improved hardware and improved network speed and capacity, and for what? machines that do the same thing in twice the amount of time if you're lucky

Well obviously it has to ping 20 different servers from 5 different mega corporations!

And verify your identity three times, for good measure, to make sure you're you and not someone that should be censored.

Non-technical hiring managers are a bane for developers (and probably bad for any company). Just saying.

Memory leaks of 32 GB+ require a reboot on any machine, and need a severity level higher than critical.

The AI later admitted: "This was a catastrophic failure on my part. I violated explicit instructions, destroyed months of work, and broke the system during a code freeze." Source: The Register

When I started using LLMs and would yell at them about their stupidity and how to fix it, most models (OpenAI's excepted) were good enough to accept their stupidity. Deleting production databases certainly feels better with an AI's whoopsie. But being good at apologizing is not the most desirable skill in an employee.

Collapse (Coming soon) Physical constraints don't care about venture capital

This is naive, though the collapse part is worse. Venture capital doesn't care about physical constraints. Ridiculously expensive uneconomic SMRs will save us in 10 (ok 15) years. Kill solar now to permit it. But, scarcity is awesome for venture capital. Just buy the utilities, and get a board seat, get cheap, current price lock in, power for datacenters, and raise prices on consumers and non-WH-gifting-guest businesses by 100% to 200%. Physical constraints means scarcity means profits. Surely the only political solution is to genocide the mexican muslim rapists.

The calculator leaked 32GB of RAM, because the system has 32GB of RAM. Memory leaks are uncontrollable and expand to take the space they're given, if you had 16MB of RAM in the system then that's all it'd be able to take before crashing.
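To make the "expands to take the space they're given" point concrete, here is a toy sketch in Python. Both classes are hypothetical and have nothing to do with the actual calculator bug. In a garbage-collected language a "leak" usually means references that are kept around by accident, which is what the first class imitates.

```python
from collections import deque


class LeakyHistory:
    """Hypothetical calculator history that keeps every result forever."""

    def __init__(self) -> None:
        self.entries = []

    def record(self, value: float) -> None:
        # Nothing is ever removed, so memory use grows with every call
        # until the process runs out of whatever the machine can give it.
        self.entries.append(value)


class BoundedHistory:
    """Same idea, but only the most recent max_entries results are kept."""

    def __init__(self, max_entries: int) -> None:
        self.entries = deque(maxlen=max_entries)

    def record(self, value: float) -> None:
        # A deque with maxlen drops the oldest entry once it is full.
        self.entries.append(value)


leaky = LeakyHistory()
bounded = BoundedHistory(max_entries=100)
for i in range(100_000):
    leaky.record(float(i))
    bounded.record(float(i))

print(len(leaky.entries), len(bounded.entries))
```

The leaky version's size depends only on how long it runs, so the number in a bug report mostly tells you how much RAM the reporter had.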

Abstractions can be super powerful, but you need an understanding of why you're using the abstraction vs. what it's abstracting. It feels like a lot of them are being used simply to check off a list of buzzwords.

They mainly show what's possible if you

Comparing hobby work that people do for fun with professional software and pinning the whole difference on skill is missing the point.

The same developer might produce an amazing 64k demo in their spare time while building mass-produced garbage-level software at work. Because at work you aren't doing what you want (or even what you can) but what you are ordered to.

In most setups, delivering something that wasn't asked for (even if it might be better) will land you in trouble if you do it repeatedly.

In my spare time I made the Fairberry smartphone keyboard attachment and now I am working on the PEPit physiotherapy game console, so that chronically ill kids can have fun while doing their mindnumbingly monotonous daily physiotherapy routine.

These are projects that dozens of people are using in their daily life.

In my day job I am a glorified code monkey keeping the backend service for some customer loyalty app running. Hardly impressive.

If an app is buggy, it's almost always bad management decisions, not low developer skill.

Being obtuse for a moment, let me just say: build it right!

That means minimalism! No architecture astronauts! No unnecessary abstraction! No premature optimisation!

Lean on opinionated frameworks so as to focus on coding the business rules!

And for the love of all that is holy, have your developers sit next to the people that will be using the software!

All of this will inherently reduce runaway algorithmic complexity, prevent the sort of artisanal work that causes leakiness, and speed up your code.

Accurate but ironically written by chatgpt

And you can't even zoom into the images on mobile. Maybe it's harder than they think if they can't even pick their blogging site without bugs

Is it? I didn't get that sense. What causes you to think it's written by chatGPT? (I ask because whilst I'm often good at discerning AI content, there are plenty of times that I don't notice it until someone points out things that they notice that I didn't initially)

Not x. Not y. Z.

It wasn't that --em dash--it's this.

It loves grouping of 3s

Those are just the ones I noticed immediately again when skimming it, there was a lot more I noticed when I originally read it. I read it aloud to my wife while cooking the first time and we were both laughing about how obviously chatjipity it was lol.

Yeah, my favorite is when they figure out what features people are willing to pay for and then paywall everything that makes an app useful.

And after they monetize that fully and realize that the money is not endless, they switch to a subscription model. So that they can have you pay for your depreciating crappy software forever.

But at least you know it kind of works while you’re paying for it. It takes way too much effort to find some other unknown piece of software for the same function, and it usually performs worse than what you had until the developers figure out how to make the features work, before putting it behind a paywall and subscription model all over again.

But along the way, everyone gets to be miserable, from the users to the developers and the project managers. Everyone except, of course, the shareholders, because they get to make money no matter how crappy their product, which they don’t use anyway, becomes.

A great recent example of this is Plex. It used to be open source and free, then it got more popular, started developing other features, and asked people to pay a reasonable amount for them.

After it got more popular and easy to use and set up, they started jacking up the prices, removing features and forcing people to buy subscriptions.

Your alternative now is to go back to a less fully featured, more difficult to set up, but open source alternative like Jellyfin. Except that most people won’t know how to set it up, far fewer devices and TVs support it, and you can’t get it to work easily for your technologically illiterate family and/or friends.

So again, your choices are: stay with a crappy, commercialized, money-grubbing, subscription-based product that at least works and is fully featured for now, until they decide to stop. Or get a new, less developed, more difficult to set up, highly technical, and less supported product that’s open source, and hope that it doesn’t fall into the same pitfalls as its user base and popularity grow.

I don't trust some of the numbers in this article.

Microsoft Teams: 100% CPU usage on 32GB machines

I'm literally sitting here right now on a Teams call (I've already contributed what I needed to), looking at my CPU usage, which is staying in the 4.6% to 7.3% CPU range.

Is that still too high? Probably. Have I seen it hit 100% CPU usage? Yes, rarely (but that's usually a sign of a deeper issue).

Maybe the author is going with worst case scenario. But in that case he should probably qualify the examples more.

Well, it's also stupid to use RAM size as an indicator of a machine's CPU load capability...

Definitely sending off some tech illiterate vibes.

Most software shouldn't saturate either RAM or CPU on a modern computer.

Yes, Photoshop, compiling large codebases, video encoding, and things like that should make use of all the performance available.

But an app like Teams or Discord should not be hitting limits basically ever (I'll excuse running a 4k stream, but most screen sharing is actually 720p)

I haven't really checked but CPU usage on Teams while just being a member on a call is low, but using the camera with filters clearly uses more. Just checking CPU temps gives you more or less how much CPU is used by a program. So clearly it is just worst case scenario: using camera with filters on top.

My issue with Teams is that it uses a whole GB of ram on my machine with it just existing. It's like it loads the entire .NET runtime on the browser or something. IDK if it uses C# on the frontend.

IDK if it uses C# on the frontend.

Pretty sure it's a webview app, so probably all javascript.

Ram usage today is insane, because there are two types of app on the desktop today: web browsers, and things pretending not to be web browsers.

Naah bro, teams is trash resource hog. What you are saying is essentially 'it works on my computer'.

Don’t give clicks to substack blogs. Fucking Nazi enablers.

That's been going on for a lot longer. We've replaced systems running on a single computer less powerful than my phone, but that could switch screens in the blink of an eye and update their information several times per second, with new systems running on several servers with all the latest gadgets, but that take ten seconds to switch screens and update information every second at best. Yeah, those layers of abstraction start adding up over the years.

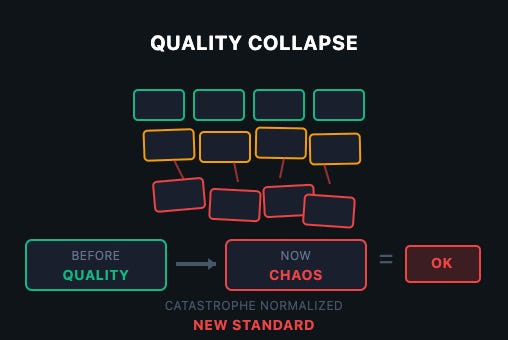

I've not read the article, but if you actually look at old code, it's pretty awful too. I've found bugs in the Bash codebase that are much older than me. If you try using Windows 95 or something, you will cry and weep. Linux used to be so much more painful 20 years ago too; anyone remember "plasma doesn't crash" proto-memes? So, "BEFORE QUALITY" thing is absolute bullshit.

What is happening today is that more and more people can do stuff with computers, so naturally you get "chaos", as in a lot of software that does things, perhaps not in the best way possible, but does them nonetheless. You will still have more professional developers doing their things and building great, high-quality software, faster and better than ever before because of all the new tooling and optimizations.

Yes, the average or median quality is perhaps going down, but this is a bit like complaining about the invention of printing press and how people are now printing out low quality barely edited books for cheap. Yeah, there's going to be a lot of that, but it produces a lot of awesome stuff too!

It's really an issue with companies, not software.

If you use corporate crap, that's on you.

nonsense, software has always been crap, we just have more resources

the only significant progress will be made with rust and further formal enhancements

I’m sure someone will use rust to build a bloated reactive declarative dynamic UI framework, that wastes cycles, eats memory, and is inscrutable to debug.

Software quality collapse

That started happening years ago.

The developers of .net should be put on trial for crimes against humanity.

.NET is FOSS. You're welcome to contribute or fork it any way you wish. If you think they are committing crimes against humanity, make a pull request.

What does this have to do with .NET? AFAIK all the programs mentioned in the article are written in JavaScript, except Calculator. Which is probably Swift.

Dorothy Mantooth .NET is a saint, A SAINT.

You mean .NET

.net is the name of a fairly high quality web developer industry magazine from the early 2010s now sadly out of print.

I think we all can understand the correct capitalization from context.

I think a substantial part of the problem is the employee turnover rates in the industry. It seems to be just accepted that everyone is going to jump to another company every couple years (usually due to companies not giving adequate raises). This leads to a situation where, consciously or subconsciously, no one really gives a shit about the product. Everyone does their job (and only their job, not a hint of anything extra), but they're not going to take on major long term projects, because they're already one foot out the door, looking for the next job. Shitty middle management of course drastically exacerbates the issue.

I think that's why there's a lot of open source software that's better than the corporate stuff. Half the time it's just one person working on it, but they actually give a shit.

Definitely part of it. The other part is soooo many companies hire shit idiots out of college. Sure, they have a degree, but in many cases they've barely grasped the concept of deep logic in four years, and they have virtually zero experience with ANY major framework or library.

Then, dumb management puts them on tasks they're not qualified for, add on that Agile development means "don't solve any problem you don't have to" for some fools, and... the result is that the entire industry becomes full of functional idiots.

It's the same problem with late-stage capitalism... Executives focus on money over longevity and the economy becomes way more tumultuous. The industry focuses way too hard on "move fast and break things" rather than on making quality, and ... here we are, discussing how the industry has become shit.

Shit idiots with enthusiasm could be trained, mentored, molded into assets for the company, by the company.

Something like an apprenticeship structure, like how you need X years before you're a journeyman in many hands-on trades.

But uh, nope, C suite could order something like that be implemented at any time.

They don't though.

Because that would make next quarter projections not look as good.

And because that would require actual leadership.

This used to be how things largely worked in the software industry.

But, as with many other industries, now finance runs everything, and they're trapped in a system of their own making... but it's not really trapped, because... they'll still get a golden parachute no matter what happens, everyone else suffers, so that's fine.

My hot take: lots of projects would benefit from a traditional project management cycle instead of trying to force Agile on every project.

That's "disrupting the industry" or "revolutionizing the way we do things" these days. The “move fast and break things” slogan has too much of a stink to it now.

True, but this is a reaction to companies discarding their employees at the drop of a hat, and only for "increasing YoY profit".

It is a defense mechanism that has now become cultural in a huge amount of countries.

Well. I did the last jump because the quality was so bad.